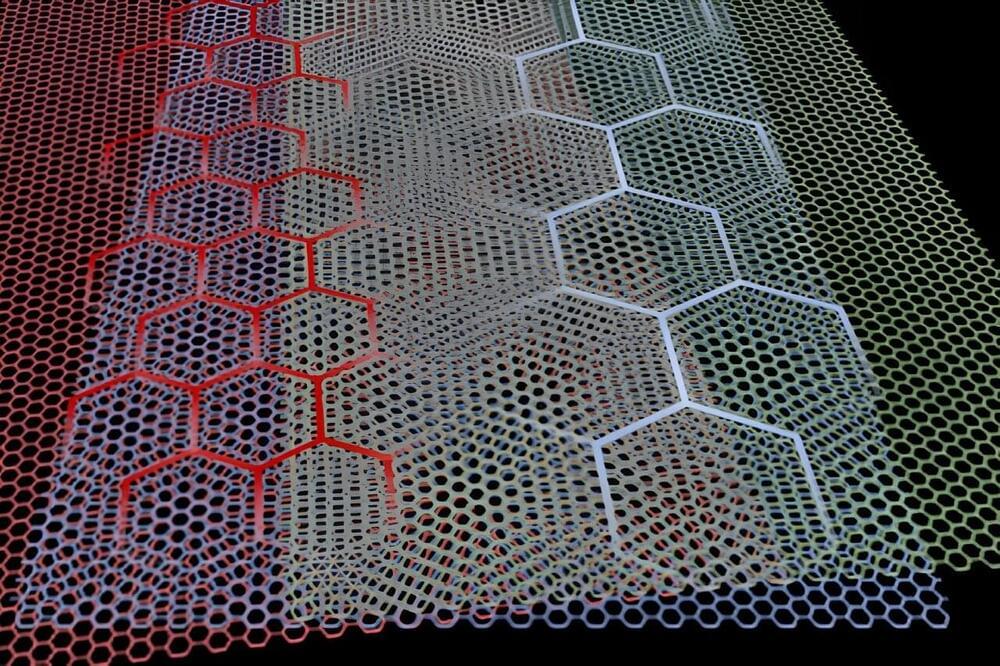

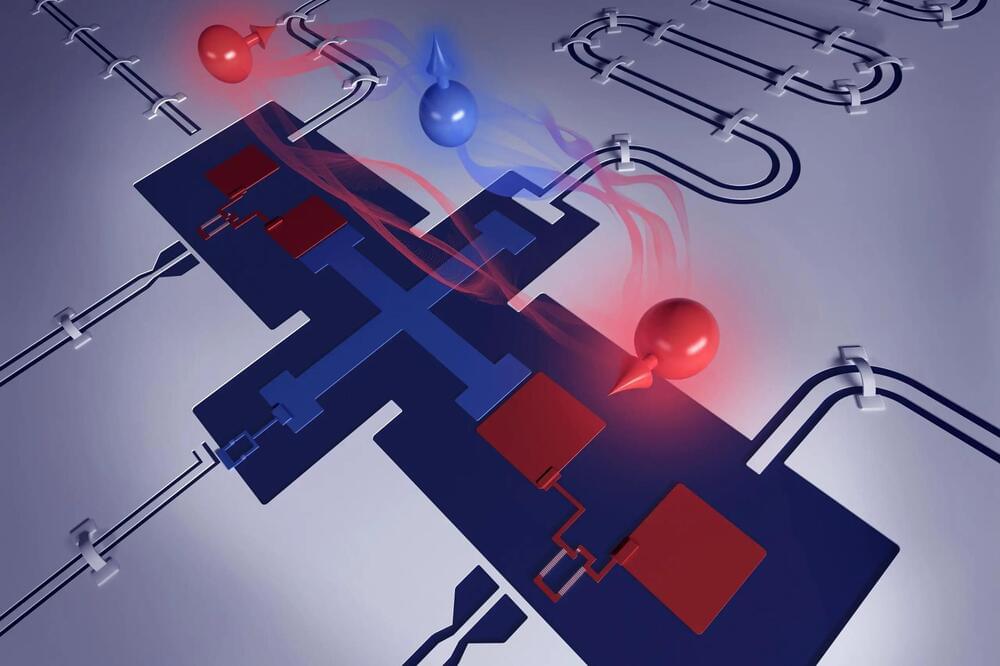

In research that could jumpstart interest in an enigmatic class of materials known as quasicrystals, MIT scientists and colleagues have discovered a relatively simple, flexible way to create new atomically thin versions that can be tuned for important phenomena. In work reported in Nature, they describe doing just that to make the materials exhibit superconductivity and more.

The research introduces a new platform not only for learning more about quasicrystals, but also for exploring exotic phenomena that can be hard to study but could lead to important applications and new physics. For example, a better understanding of superconductivity, in which electrons pass through a material with no resistance, could allow much more efficient electronic devices.

The work brings together two previously unconnected fields: quasicrystals and twistronics. The latter was pioneered at MIT only about five years ago by Pablo Jarillo-Herrero, the Cecil and Ida Green Professor of Physics at MIT and corresponding author of the paper.