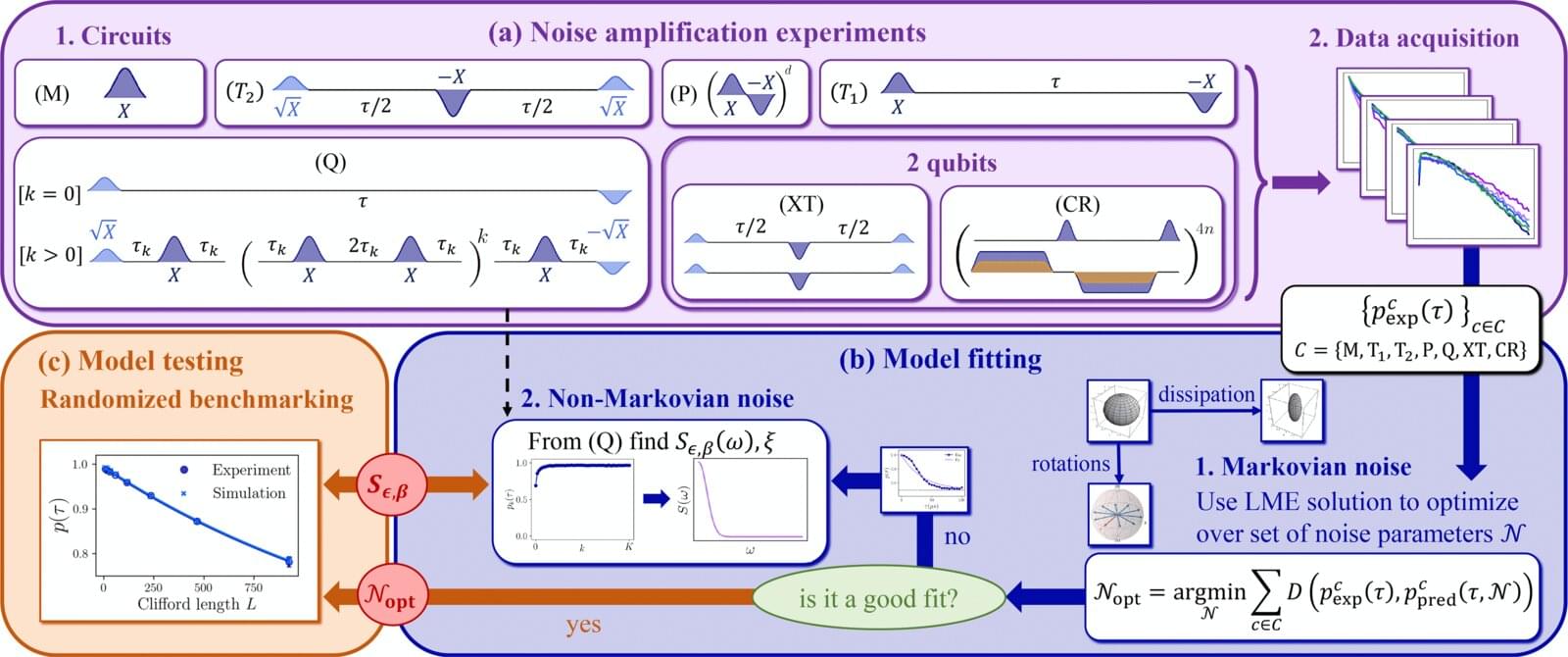

Researchers from the Johns Hopkins Applied Physics Laboratory (APL) in Laurel, Maryland, and Johns Hopkins University in Baltimore have developed a practical, comprehensive noise-modeling framework for a popular class of superconducting quantum processors. Their work, published in the journal PRX Quantum, offers a sevenfold improvement in predictive accuracy over existing approaches.

The extreme sensitivity that makes quantum bits, or qubits, so valuable for computing also makes them intrinsically prone to noise: interference arising from environmental factors such as electric and magnetic fields or temperature fluctuations. Developing accurate noise models is key to creating the robust quantum algorithms and resilient error-correction protocols required to build truly fault-tolerant quantum computers.

“To really advance the field, we need models that can predict a wide range of behavior while utilizing a small number of parameters, rather than theoretical models that try to account for all of the fundamental physics at play in quantum interactions,” said project lead Gregory Quiroz, a senior physicist at APL and an associate research professor in the Department of Physics and Astronomy at the Johns Hopkins University Krieger School of Arts and Sciences. “The novelty of our approach lies in a unified and experimentally validated framework that connects multiple noise mechanisms and yields a coherent predictive methodology.”