OpenAI’s first DevDay was jam-packed with updates and announcements. But according to CEO Sam Altman, we’ve yet to scratch the AI surface.

The B-21 Raider, the Air Force’s new nuclear stealth bomber, takes flight for first time

The B-21 Raider took its first test flight on Friday, moving the futuristic warplane closer to becoming the nation’s next nuclear weapons stealth bomber.

The Raider flew in Palmdale, California, where it has been undergoing testing and development by Northrop Grumman.

The Air Force is planning to build 100 of the warplanes, which have a flying wing shape much like their predecessor, the B-2 Spirit, but will incorporate advanced materials, propulsion and stealth technology to make them more survivable in a future conflict. The plane is planned to be produced in variants with and without pilots.

Edible electronics: The future of sustainable devices is in your food

A team of researchers from the Italian Institute of Technology has created the first-ever rechargeable edible battery made out of gold foil, nori seaweed, and beeswax. A charger you can eat? Sounds good to us.

The Italian Institute of Technology has really brought innovation to the table at the Maker Faire in Rome.

“Hostile Architecture” has many purposes, but should it be used against NYC’s most vulnerable?

There are examples of this type of design all over the city, some by private companies, and others by the city itself.

Maternal metabolic conditions linked to children’s neurodevelopmental risks, study shows

A study investigates how maternal metabolic conditions such as pregestational diabetes, gestational diabetes, and obesity affect the risk of neurodevelopmental conditions in children. It highlights the significant role of obstetric and neonatal complications in this relationship and emphasizes the need to manage these complications to reduce children’s risk of developing conditions such as ADHD and autism.

The Impact of AI on Medical Records — The Medical Futurist

You requested a video exploring the future of medical records, and your wish is our command!

We’re aware that administrative tasks are often the bane of a physician’s work, contributing significantly to burnout. So, let’s embark on a journey together to discover how the future might unfold, and whether artificial intelligence has the potential to lighten this heavy burden.

How Einstein Unlocked the Quantum Universe and Created the Photon

It started with a simple experiment that was all the rage in the early 20th century. As is often the case, a simple experiment went on to change the world, leading Einstein himself to open the revolutionary door to the quantum world.

Here’s the setup. You take a piece of metal. You shine a light on it. You wait for the electrons in the metal to get enough energy from the light that they pop off the surface and go flying out. You point an electron detector at the metal to measure the number and energy of the electrons.

Done.

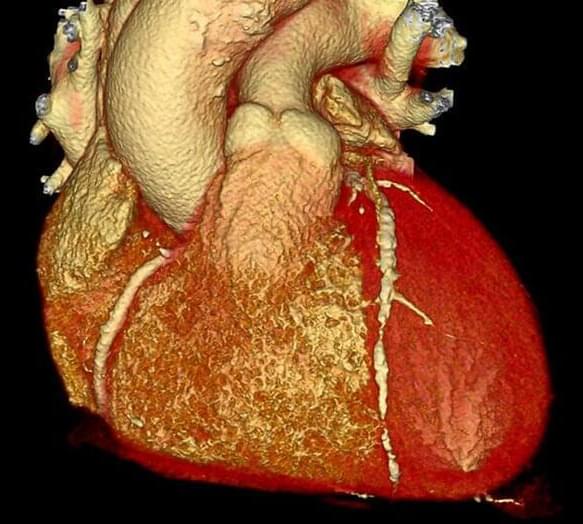

New Gene Editing Treatment Cuts Dangerous Cholesterol in Small Study

The patients volunteered for an experimental cholesterol-lowering treatment using gene editing that was unlike anything tried in patients before.

The result, reported Sunday by the company Verve Therapeutics of Boston at a meeting of the American Heart Association, showed that the treatment appeared to reduce cholesterol levels markedly in patients and that it appeared to be safe.

The trial involved only 10 patients, with an average age of 54. Each had a genetic abnormality, familial hypercholesterolemia, that affects around one million people in the United States. But the findings could also point the way for millions of other patients around the world who are contending with heart disease, which remains a leading cause of death. In the United States alone, more than 800,000 people have heart attacks each year.