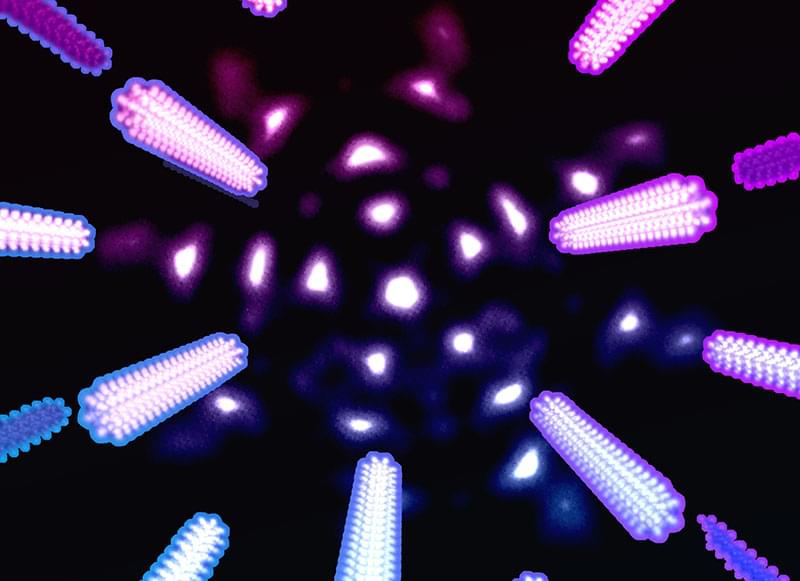

Advanced materials, including two-dimensional (atomically thin) materials just a few atoms thick, are essential for the future of microelectronics technology. Now a team at Los Alamos National Laboratory has developed a way to directly measure the thermal expansion coefficient of such materials, which is the fractional change in a material's size per degree of temperature change. Knowing this value can help address heat-related performance issues when these materials are incorporated into microelectronics such as computer chips.
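The linear thermal expansion coefficient is conventionally defined as α = (1/a)(da/dT), where a is a length such as a crystal lattice parameter and T is temperature. The minimal Python sketch below estimates α from lattice parameters measured at several temperatures. It assumes those lattice parameters have already been extracted, for example from diffraction data. It takes da/dT as the least-squares slope and divides by the lattice parameter at a reference temperature. The function name and the numbers in the usage lines are hypothetical illustrations, not the Los Alamos team's analysis pipeline or measured results.

```python
def thermal_expansion_coefficient(temps_k, lattice_params, ref_index=0):
    """Estimate the linear thermal expansion coefficient alpha (1/K).

    Hypothetical helper: alpha = (1 / a_ref) * da/dT, where da/dT is the
    least-squares slope of lattice parameter versus temperature and a_ref
    is the lattice parameter at the reference measurement.
    """
    n = len(temps_k)
    if n != len(lattice_params) or n < 2:
        raise ValueError("need at least two matched (T, a) measurements")

    mean_t = sum(temps_k) / n
    mean_a = sum(lattice_params) / n

    # Covariance and variance terms for an ordinary least-squares line fit.
    cov = sum((t - mean_t) * (a - mean_a) for t, a in zip(temps_k, lattice_params))
    var = sum((t - mean_t) ** 2 for t in temps_k)
    if var == 0:
        raise ValueError("temperatures must not all be equal")

    slope = cov / var  # da/dT, in lattice-parameter units per kelvin
    return slope / lattice_params[ref_index]


if __name__ == "__main__":
    # Synthetic, made-up inputs (arbitrary length units), not measured data.
    temps = [300.0, 400.0, 500.0, 600.0]
    params = [3.0, 3.003, 3.006, 3.009]
    print(thermal_expansion_coefficient(temps, params))
```

A least-squares slope is used rather than a two-point difference because it averages out noise across all temperature steps. For a very thin material, the sign and size of α may differ from those of the bulk crystal, which is why a direct measurement matters.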

The research has been published in ACS Nano ("Direct measurement of the thermal expansion coefficient of epitaxial WSe₂ by four-dimensional scanning transmission electron microscopy").

“It’s well understood that heating a material usually results in expansion of the atoms arranged in the material’s structure,” said Theresa Kucinski, scientist with the Nuclear Materials Science Group at Los Alamos. “But things get weird when the material is only one to a few atoms thick.”