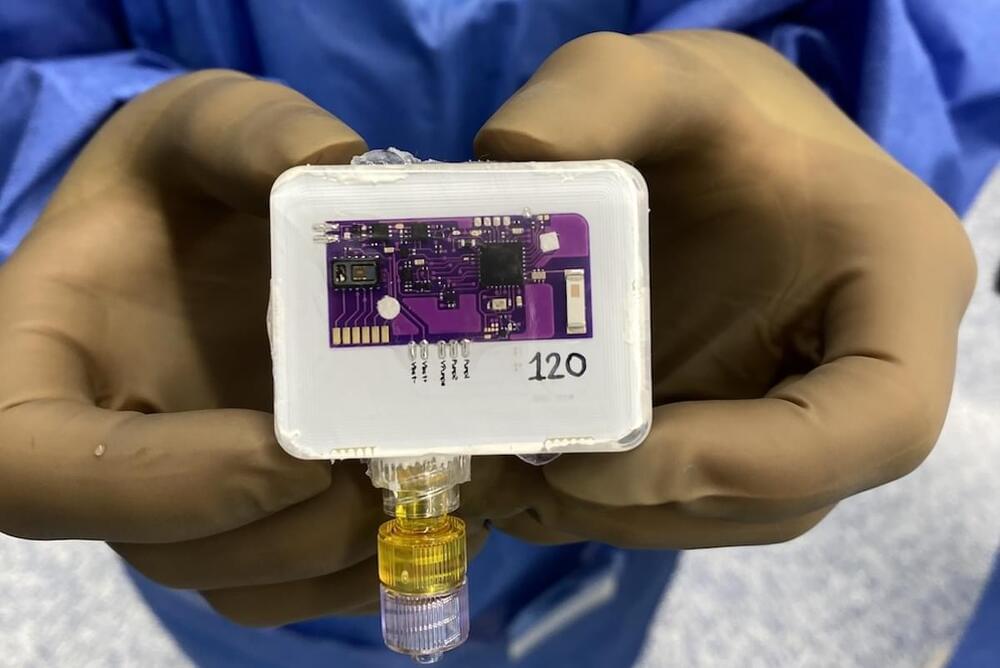

I love the analogy they use here of space flight: a deeply impressive human accomplishment that has, nevertheless, primarily relied on engineering solutions because the science behind it is relatively well understood. It’s a great reminder that BCIs are not “rocket science” because, unlike rocket science, we don’t yet have the science to underpin the engineering that future advances will rely on.

Despite this, Gordon and Seth throw a bone to engineers who can’t wait for the science to catch up. They do so by suggesting that artificial intelligence may “soften”, if not completely eliminate, the science challenges facing the development of successful BCIs.

At this point it’s hard to tell how far AI-driven engineering solutions might support BCIs designed to enhance performance, and Gordon and Seth suggest that near-term technologies may be “limited to controlling apps on phones or other similarly prosaic activities”. But they also acknowledge that, in spite of the considerable challenges, BCIs still hold promise for human enhancement in the future.