The U.S. Geological Survey says it was magnitude 7.0. No casualties have been reported. On Dec. 22, a tsunami, likely caused by the volcano Anak Krakatau, hit Indonesia and killed about 430 people.

How math helps us solve the universe’s deepest mysteries

One of the great insights of science is that the universe has an underlying order. The supreme goal of physicists is to understand this order through laws that describe the behavior of the most basic particles and the forces between them. For centuries, we have searched for these laws by studying the results of experiments.

Since the 1970s, however, experiments at the world’s most powerful atom-smashers have offered few new clues. So some of the world’s leading physicists have looked to a different source of insight: modern mathematics. These physicists are sometimes accused of doing ‘fairy-tale physics’, unrelated to the real world. But in The Universe Speaks in Numbers, award-winning science writer and biographer Graham Farmelo argues that the physics they are doing is based squarely on the well-established principles of quantum theory and relativity, and is part of a tradition dating back to Isaac Newton.

Tidying up the debris we have left in orbit is as important a task as cleaning up the Earth. If we don’t, it will become increasingly hard to launch rockets into space.

A team from Cornell is out to prove that water is all you need to propel a spacecraft through space. They will attempt to send a CubeSat, a tiny satellite no bigger than a cereal box, into orbit around the moon.

Researchers have explored how memory is tied to the hippocampus, with findings that will expand scientists’ understanding of how memory works and ideally aid in detection, prevention, and treatment of memory disorders.

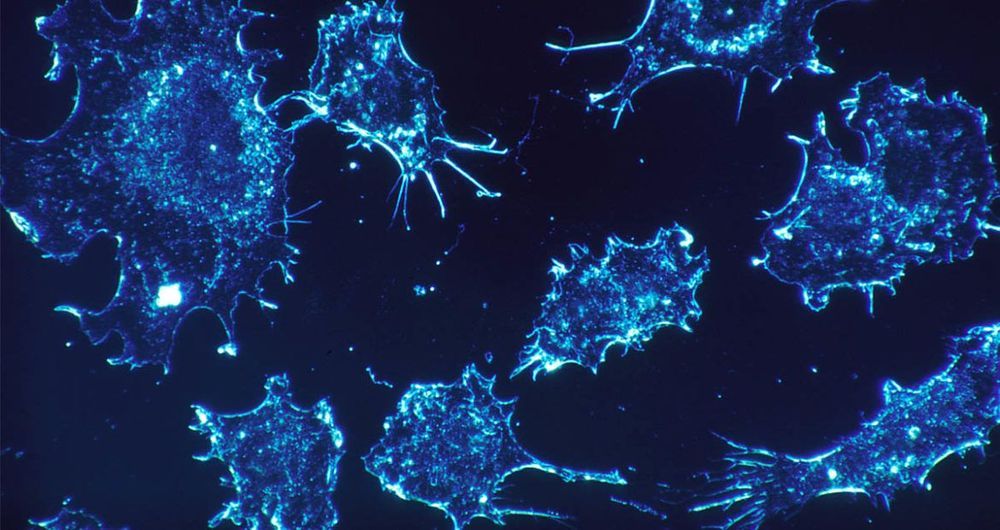

A quick and easy test devised by scientists from the University of Queensland could transform cancer diagnosis as we know it.

Cancer is a difficult disease to diagnose because different types are characterised by different signatures. Until now, scientists have been unable to find a unique signature common to all forms of cancer that would set it apart from healthy cells.

That’s what University of Queensland researchers Dr Laura Carrascosa, Dr Abu Sina and Professor Matt Trau have addressed. They have discovered a unique DNA nanostructure that seems to be common to all types of cancer and is visible when cancer cells are placed in water.

But despite all our advances, we’re not a whole lot closer to creating net-positive nuclear fusion. Put simply, that’s because these machines just take so much energy to generate plasma.

In fact, Wendelstein 7-X isn’t even intended to generate usable amounts of energy, ever. It’s just a proof of concept.

But for years, Hora and his team have been working on alternative designs. In this study, they tested those designs both experimentally and through simulations.