Sarcasm, a complex linguistic phenomenon common in online communication, lets people express deep-seated opinions or emotions in a way that can be witty or passive-aggressive but more often demeans or ridicules the person being addressed. Recognizing sarcasm in written text is crucial for understanding the true intent behind a statement, particularly on social media and in online customer reviews.

Spotting sarcasm offline is usually fairly easy, given facial expression, body language, and other cues, but it is much harder to decipher in online text. New work published in the International Journal of Wireless and Mobile Computing aims to meet this challenge. Geeta Abakash Sahu and Manoj Hudnurkar of the Symbiosis International University in Pune, India, have developed an advanced sarcasm detection model aimed at accurately identifying sarcastic remarks in digital conversations.

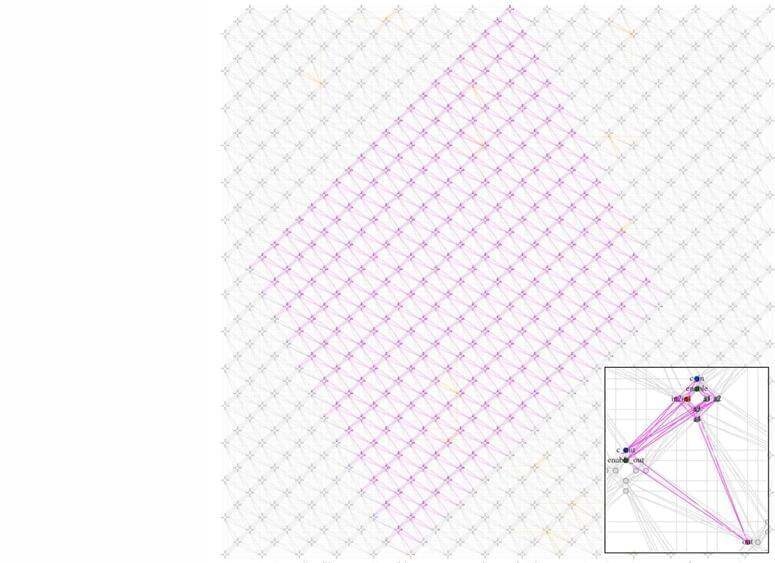

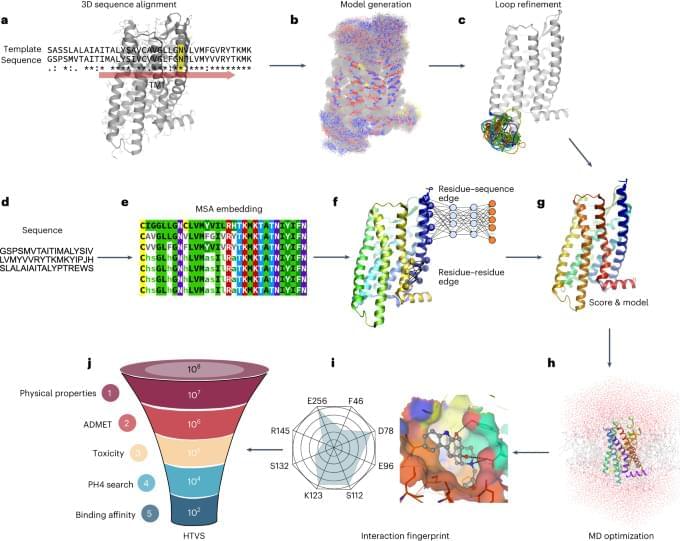

The team’s model comprises four main phases. It begins with text pre-processing, which filters out common, or “noise,” words such as “the,” “it,” and “and,” and then breaks the text down into smaller units. The algorithm then extracts features indicative of sarcasm from this pre-processed data, based on measures such as information gain, chi-square, mutual information, and symmetrical uncertainty. Because this can produce a large number of features, the team used optimal feature selection techniques to keep the model efficient by prioritizing only the most relevant ones.
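To make the pre-processing and feature-scoring ideas concrete, here is a minimal Python sketch. It is not the authors' implementation: the stop-word list, the function names, and the choice to score single words by information gain and keep the top few are all assumptions made for illustration, and the article does not describe the team's actual selection procedure in this detail.

```python
import math
import re
from collections import Counter

# Tiny illustrative stop-word list (an assumption; real lists are much longer).
STOP_WORDS = {"the", "it", "and", "a", "is", "to", "of", "i", "this", "was", "for"}


def preprocess(text):
    """Lowercase the text, split it into word tokens, and drop stop words."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return [t for t in tokens if t not in STOP_WORDS]


def entropy(counts):
    """Shannon entropy (in bits) of a distribution given as raw counts."""
    total = sum(counts)
    return -sum((c / total) * math.log2(c / total) for c in counts if c > 0)


def information_gain(docs, labels, term):
    """Drop in label entropy from knowing whether `term` occurs in a document.

    `docs` is a list of token sets; `labels` holds one class label per document.
    """
    with_term = [y for d, y in zip(docs, labels) if term in d]
    without_term = [y for d, y in zip(docs, labels) if term not in d]
    n = len(labels)
    gain = entropy(list(Counter(labels).values()))
    for subset in (with_term, without_term):
        if subset:
            gain -= (len(subset) / n) * entropy(list(Counter(subset).values()))
    return gain


def select_features(texts, labels, k):
    """Return the k terms with the highest information gain (ties broken A-Z)."""
    docs = [set(preprocess(t)) for t in texts]
    vocab = sorted(set().union(*docs))
    scored = [(information_gain(docs, labels, term), term) for term in vocab]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [term for _, term in scored[:k]]
```

Calling `select_features(texts, labels, k)` on a list of labelled example sentences (say, `1` for sarcastic and `0` for sincere) returns the `k` words whose presence or absence says the most about the label. Chi-square, mutual information, and symmetrical uncertainty could be swapped in as alternative scoring functions with the same overall shape.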