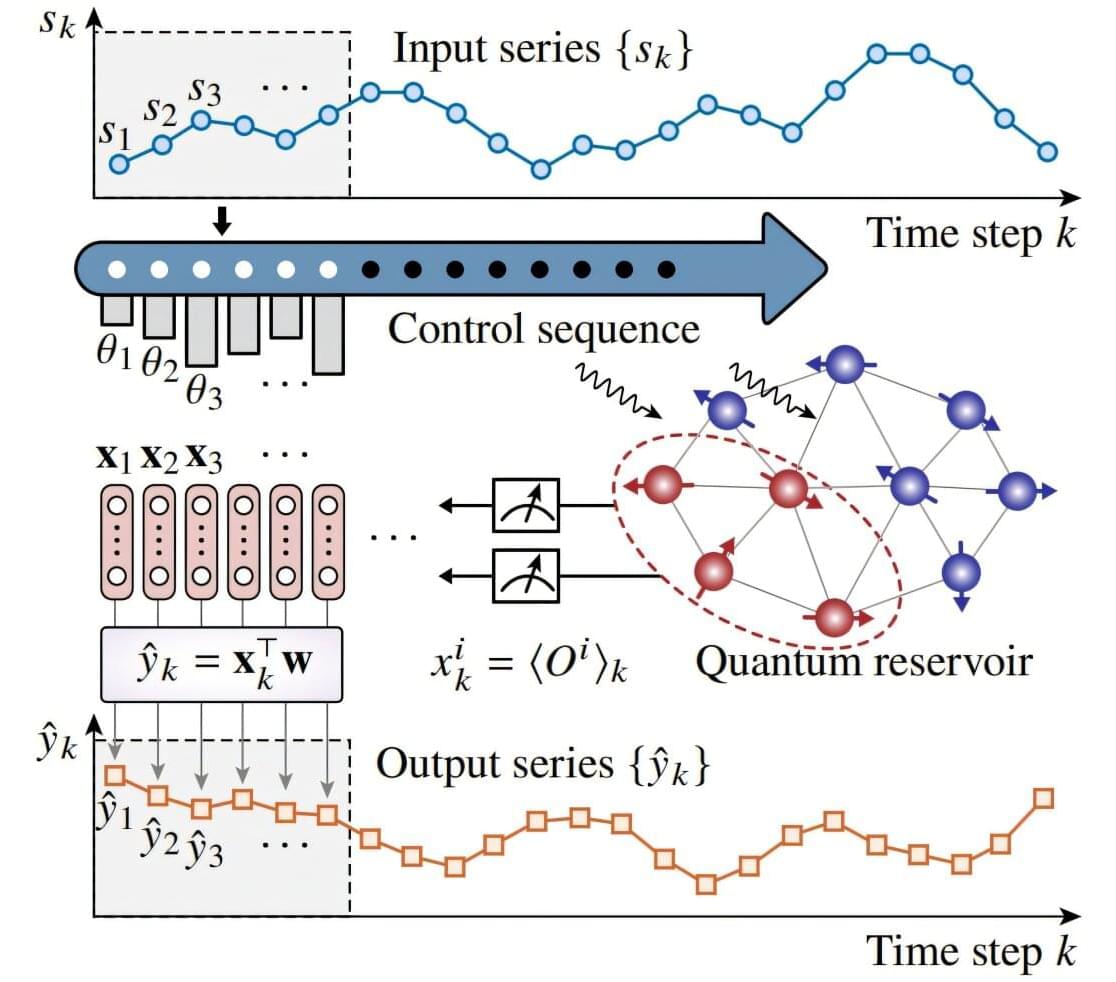

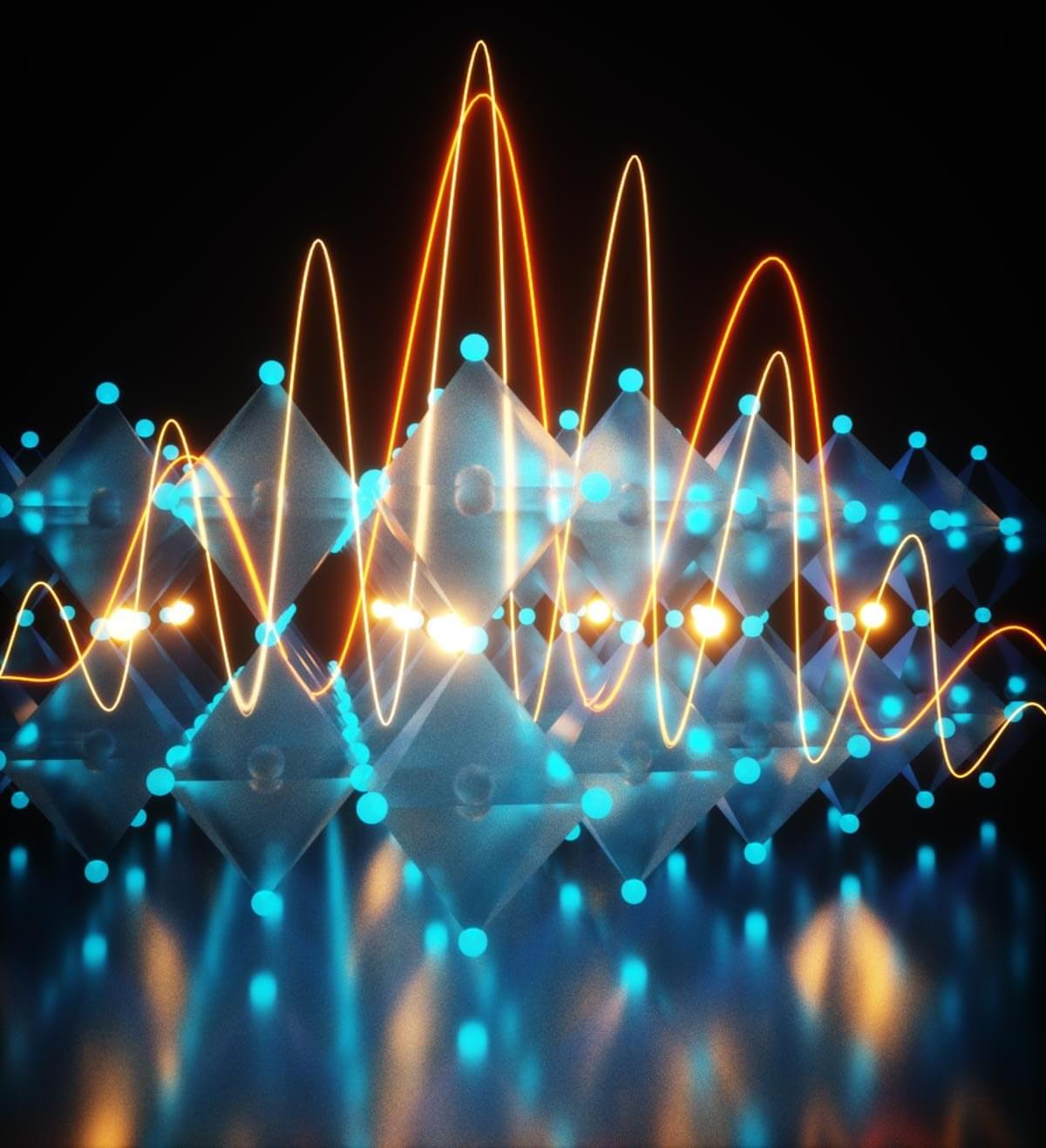

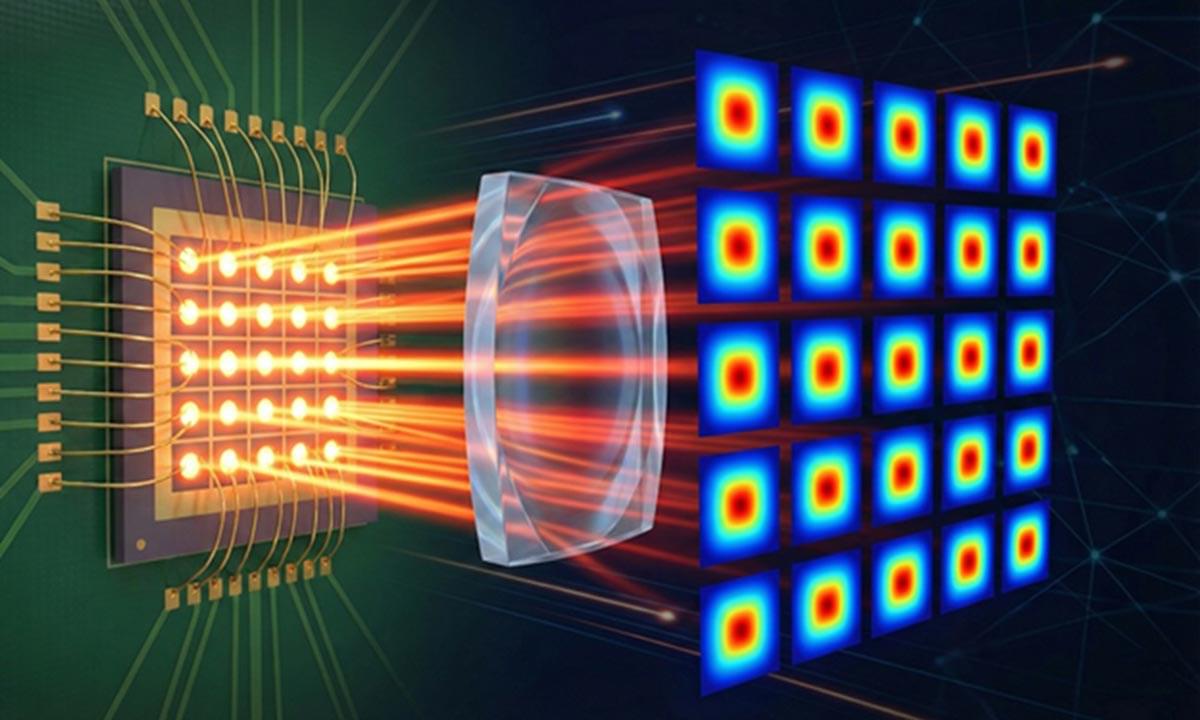

Can a handful of atoms outperform a much larger digital neural network on a real-world task? The answer may be yes. In a study published in *Physical Review Letters*, a team led by Prof. Peng Xinhua and Assoc. Prof. Li Zhaokai from the University of Science and Technology of China of the Chinese Academy of Sciences demonstrated that a quantum processor comprising just nine interacting spins outperformed classical networks with thousands of nodes on realistic weather forecasting tasks.

By exploiting unique quantum features such as superposition and entanglement, quantum devices offer new ways to represent and process information.

Recent experiments have shown their advantages in specialized benchmark tasks, but extending these gains to real-world applications remains a challenge. In particular, many quantum approaches rely on complex circuits that are difficult to implement accurately on today’s noisy hardware.