Colin Jacobs, PhD, assistant professor in the Department of Medical Imaging at Radboud University Medical Center in Nijmegen, The Netherlands, and Kiran Vaidhya Venkadesh, a second-year PhD candidate with the Diagnostic Image Analysis Group at Radboud University Medical Center, discuss their 2021 Radiology study, which used CT images from the National Lung Screening Trial (NLST) to train a deep learning algorithm to estimate the malignancy risk of lung nodules.


There’s a Strange Link Between Depression And Body Temperature, Study Finds

To better treat and prevent depression, we need to understand more about the brains and bodies in which it occurs.

Curiously, a handful of studies have identified links between depressive symptoms and body temperature, yet their small sample sizes have left too much room for doubt.

In a more recent study published in February, a team led by researchers from the University of California San Francisco (UCSF) analyzed data collected from 20,880 individuals over seven months and found that people with depression tend to have higher body temperatures.

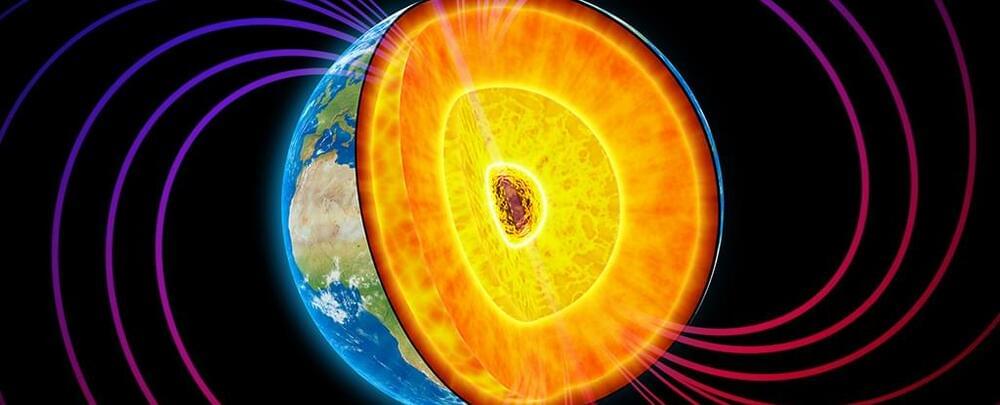

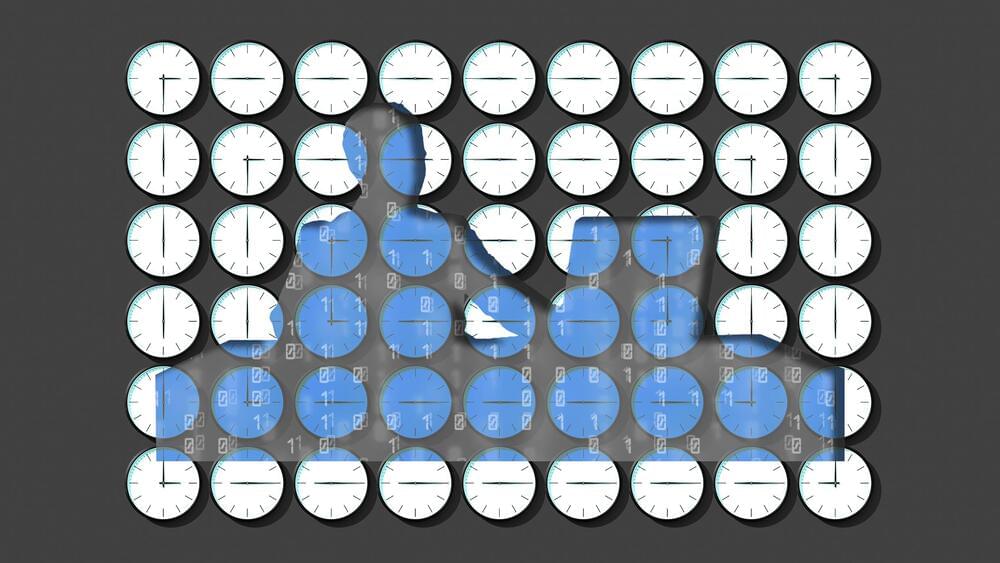

It’s Official: The Rotation of Earth’s Inner Core Really Is Slowing Down

The rotation of Earth’s inner core really has slowed down, a new study has confirmed, opening up questions about what’s happening in the center of the planet and how we might be affected.

The researchers behind the finding, led by a team from the University of Southern California (USC), think this change in the core's rotation could alter the length of our days, albeit only by tiny fractions of a second, so you won't need to reset your watches just yet.

“When I first saw the seismograms that hinted at this change, I was stumped,” says Earth scientist John Vidale from USC. “But when we found two dozen more observations signaling the same pattern, the result was inescapable.”

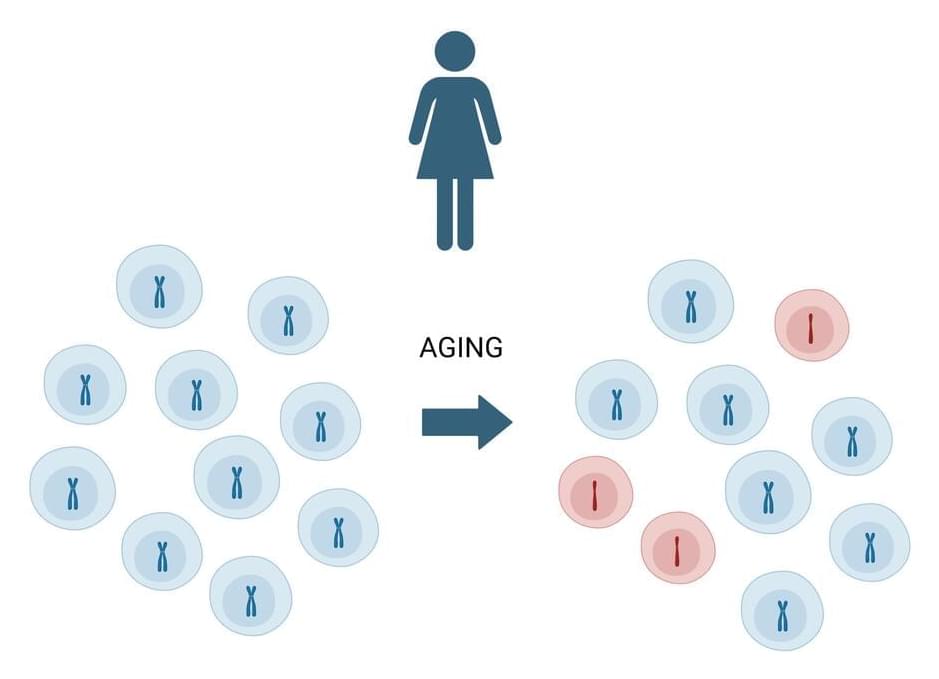

New DNA sequencing technique detects early genetic mutations

How HiDEF-seq could advance cancer treatment:

The HiDEF-seq technique could help researchers develop new prevention approaches and treatments for genetic diseases and even cancer.

Gilad Evrony, senior study author and a core member of the Center for Human Genetics & Genomics at NYU Grossman School of Medicine, told Science Direct:

“Our new HiDEF-seq sequencing technique allows us to see the earliest fingerprints of molecular changes in DNA when the changes are only in single strands of DNA.”

Unlocking the Future of Quantum Computing: Insights from Paul Terry, CEO of Photonic Inc.

Paul Terry, CEO of Photonic Inc., explores the crucial phases needed to develop large-scale, fault-tolerant quantum systems.

What Salesforce learned after saving 50,000 hours of work using AI

Salesforce recently announced that it has rolled out more than 50 AI-powered tools to its workforce and reported that these tools have collectively saved its employees more than 50,000 hours of working time, or roughly 24 years' worth, in just three months.
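The "24 years" figure only works if it counts working time rather than calendar time. Below is a minimal sketch of the conversion, assuming a full-time working year of 2,080 hours (40 hours a week for 52 weeks, with no deduction for holidays); that assumption is ours, not Salesforce's, and the names in the code are illustrative.

```python
# Sketch: convert the reported 50,000 saved hours into "years of work".
# Assumption (not stated in the article): one full-time working year is
# 40 hours/week x 52 weeks = 2,080 hours, with no deduction for holidays.

HOURS_SAVED = 50_000
HOURS_PER_WEEK = 40   # assumed full-time week
WEEKS_PER_YEAR = 52   # assumed working weeks per year
HOURS_PER_CALENDAR_YEAR = 24 * 365  # round-the-clock hours in a non-leap year


def working_years(hours: float,
                  hours_per_week: float = HOURS_PER_WEEK,
                  weeks_per_year: float = WEEKS_PER_YEAR) -> float:
    """Return how many full-time working years `hours` corresponds to."""
    return hours / (hours_per_week * weeks_per_year)


# Working-time basis: 50,000 / 2,080
print(f"{working_years(HOURS_SAVED):.1f} working years")  # 24.0 working years

# Calendar-time basis, for comparison: 50,000 / 8,760
print(f"{HOURS_SAVED / HOURS_PER_CALENDAR_YEAR:.1f} calendar years")  # 5.7 calendar years
```

Under that assumption, 50,000 hours comes to about 24 working years; counted as round-the-clock calendar time, the same hours would amount to under six years.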

Salesforce is an especially compelling case study of AI's impact on work, not only because the company tests tools on its own workforce but also because so many others rely on Salesforce's products to do their jobs each day. Simply put: Salesforce is in the business of work.

Salesforce has more than 70,000 employees worldwide, a 30% increase since 2020. The software giant also builds products used by employees at some 150,000 workplaces, from small businesses to Fortune 500 companies and from sales and customer service teams to marketing and tech teams.

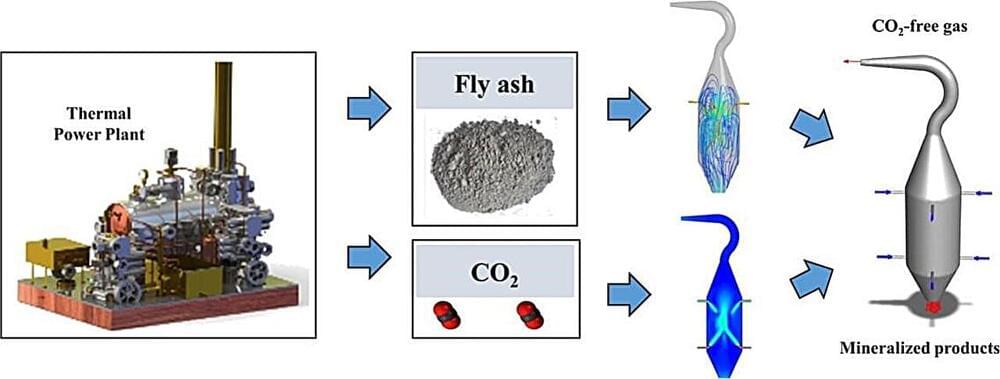

Mineralizing emissions: Advanced reactor designs for CO₂ capture

To advance sustainable waste management and CO₂ sequestration, researchers have designed reactors that mineralize carbon dioxide using fly ash particles. The technique could offer a lasting way to address greenhouse gas emissions while repurposing an industrial by-product in the process.