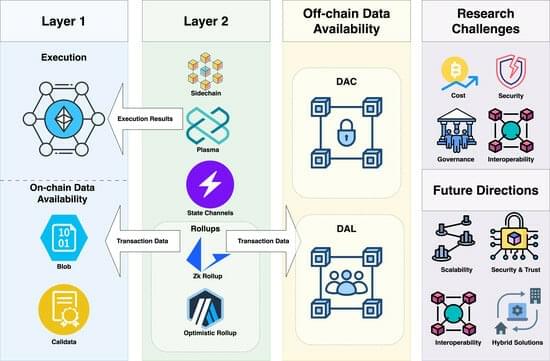

Layer 2 solutions have emerged in recent years as a valuable alternative to increase the throughput and scalability of blockchain-based architectures.

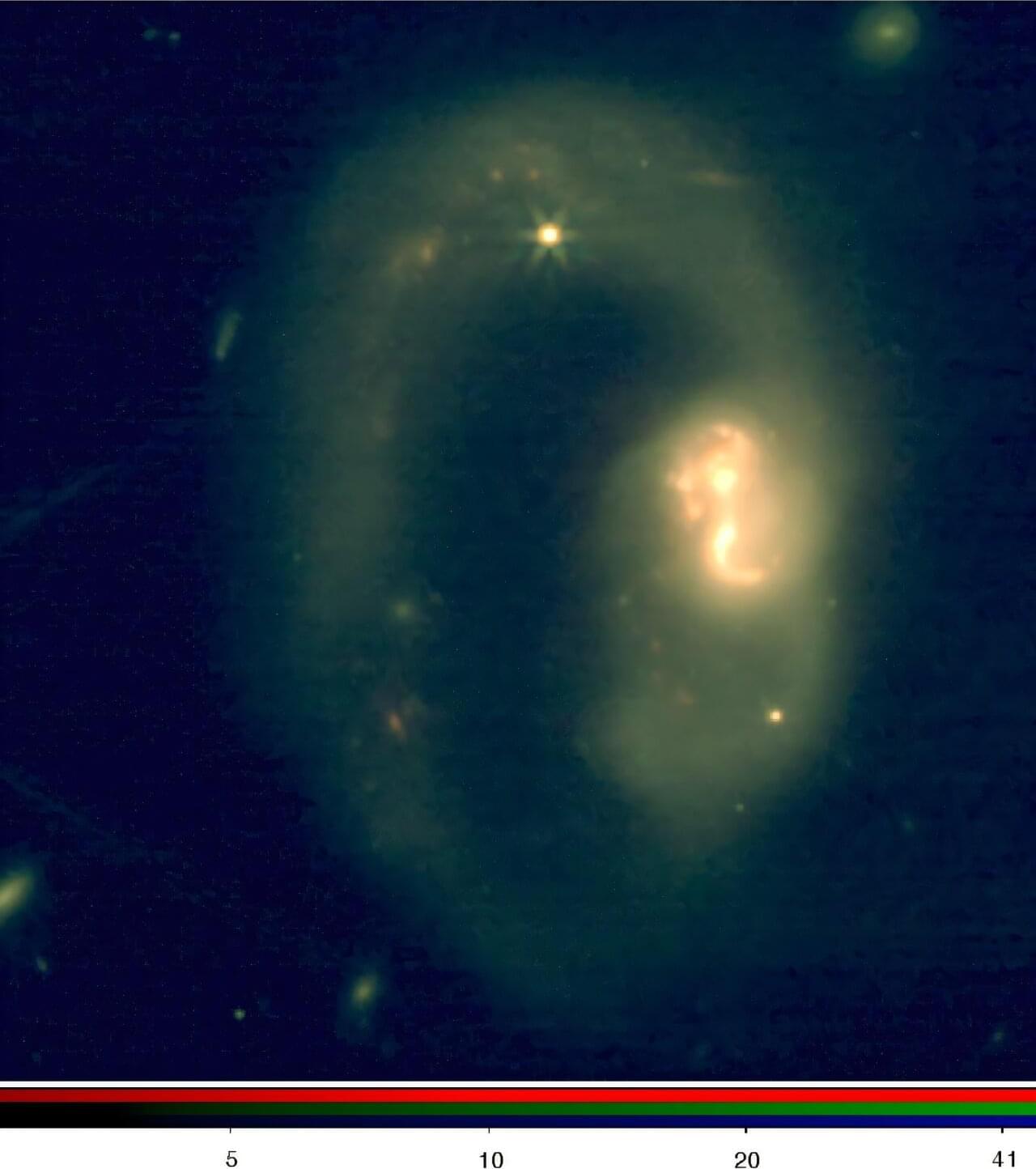

A study led by the Center for Astrobiology (CAB), CSIC-INTA, using modeling techniques developed at the University of Oxford, has uncovered an unprecedented richness of small organic molecules in the deeply obscured nucleus of a nearby galaxy, thanks to observations made with the James Webb Space Telescope (JWST).

The work, published in Nature Astronomy, provides new insights into how complex organic molecules and carbon are processed in some of the most extreme environments in the universe.

The study focuses on IRAS 07251–0248, an ultra-luminous infrared galaxy whose nucleus is hidden behind vast amounts of gas and dust. This material absorbs most of the radiation emitted by the central supermassive black hole, making it extremely difficult to study with conventional telescopes.

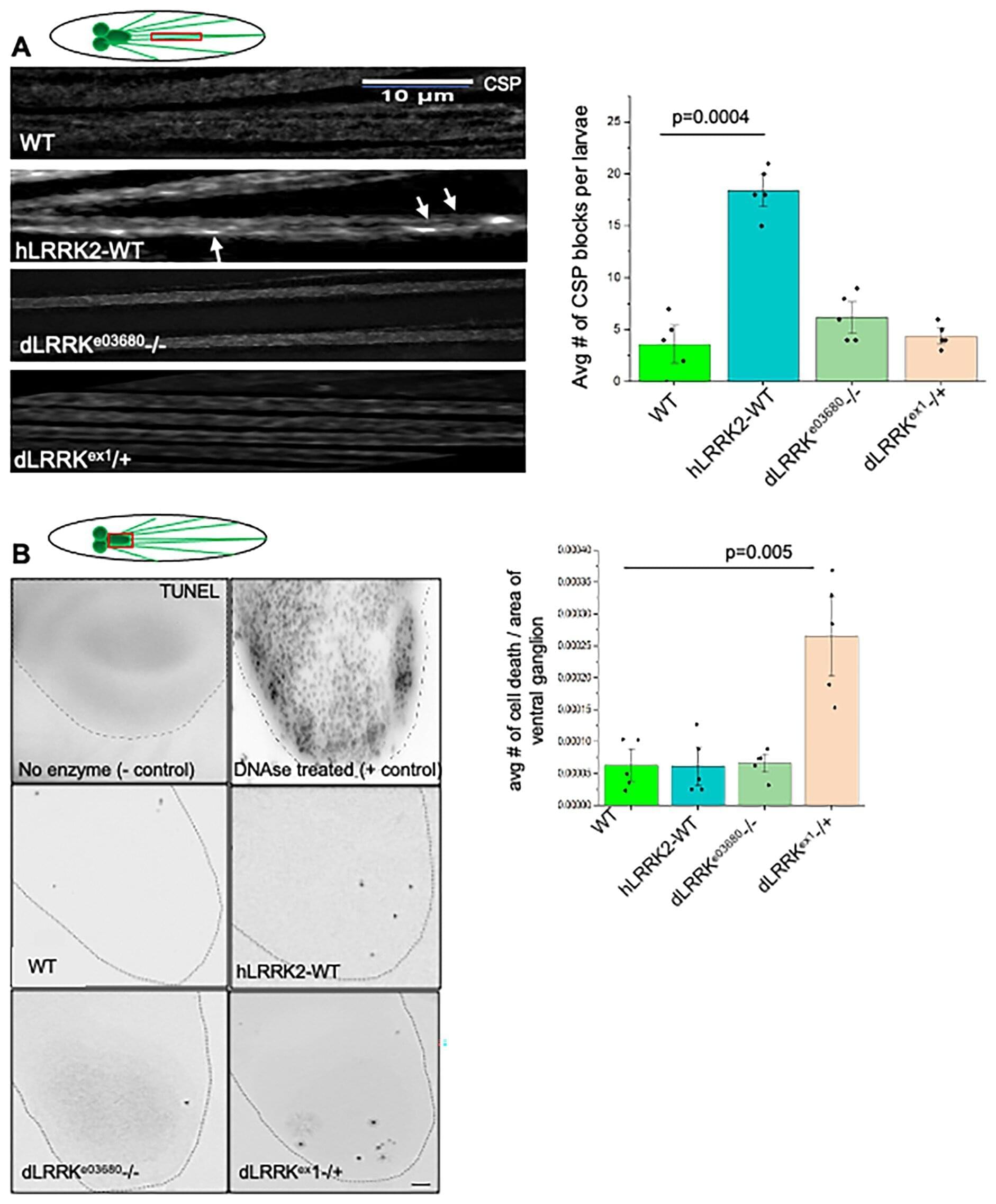

A hallmark of Parkinson’s disease is the buildup of Lewy bodies—misfolded clumps of the protein known as alpha-synuclein. Long before Lewy bodies form, alpha-synuclein can interfere with neurons’ ability to transport proteins and other cargo along their axons to the synapses. When present at high levels, alpha-synuclein binds too tightly to structures inside the axon, creating the cellular equivalent of traffic jams. These disruptions may even help set the stage for the later accumulation of Lewy bodies in the brain.

Now, University at Buffalo researchers have identified a way to reduce these traffic jams and restore flow—by altering how alpha-synuclein interacts with another Parkinson’s-related protein known as leucine-rich repeat kinase 2 (LRRK2).

In a study published last month, the researchers increased levels of specific mutant forms of LRRK2 in fruit fly larvae. They found that one mutation had a downstream effect on alpha-synuclein, limiting its ability to bind to cargo and disrupt axonal transport. The research is published in the journal Frontiers in Molecular Neuroscience.

A pioneering study marks a major step toward eliminating the need for daily insulin injections for people with diabetes. The study was led by Assistant Professor Shady Farah of the Faculty of Chemical Engineering at the Technion—Israel Institute of Technology, in co-correspondence with MIT, and in collaboration with Harvard University, Johns Hopkins University, and the University of Massachusetts. The findings are published in the journal Science Translational Medicine.

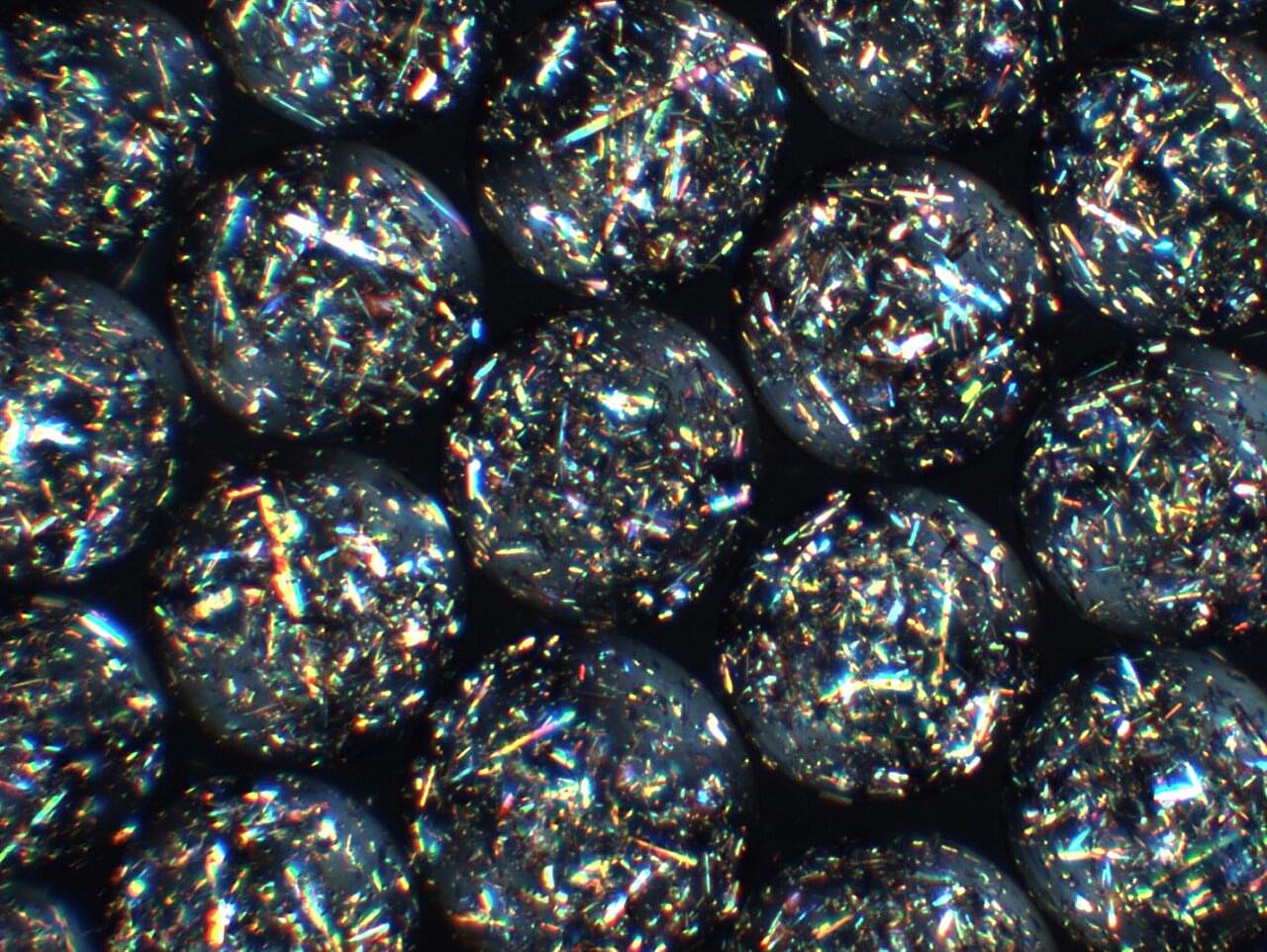

The research introduces a living, cell-based implant that can function as an autonomous artificial pancreas: essentially a long-lasting living drug, made possible by a novel crystalline shield technology. Once implanted, the system operates entirely on its own: it continuously senses blood-glucose levels, produces insulin within the implant itself, and releases the exact amount needed, precisely when it is needed. In effect, the implant becomes a self-regulating, drug-manufacturing organ inside the body, requiring no external pumps, injections, or patient intervention.

One of the study’s most significant breakthroughs addresses the longstanding challenge of immune rejection, which has limited the success of cell-based therapies for decades. The researchers developed engineered therapeutic crystals—called “crystalline shield”—that shield the implant from the immune system, preventing it from being recognized as a foreign object. This protective strategy enables the implant to function reliably and continuously for several years.

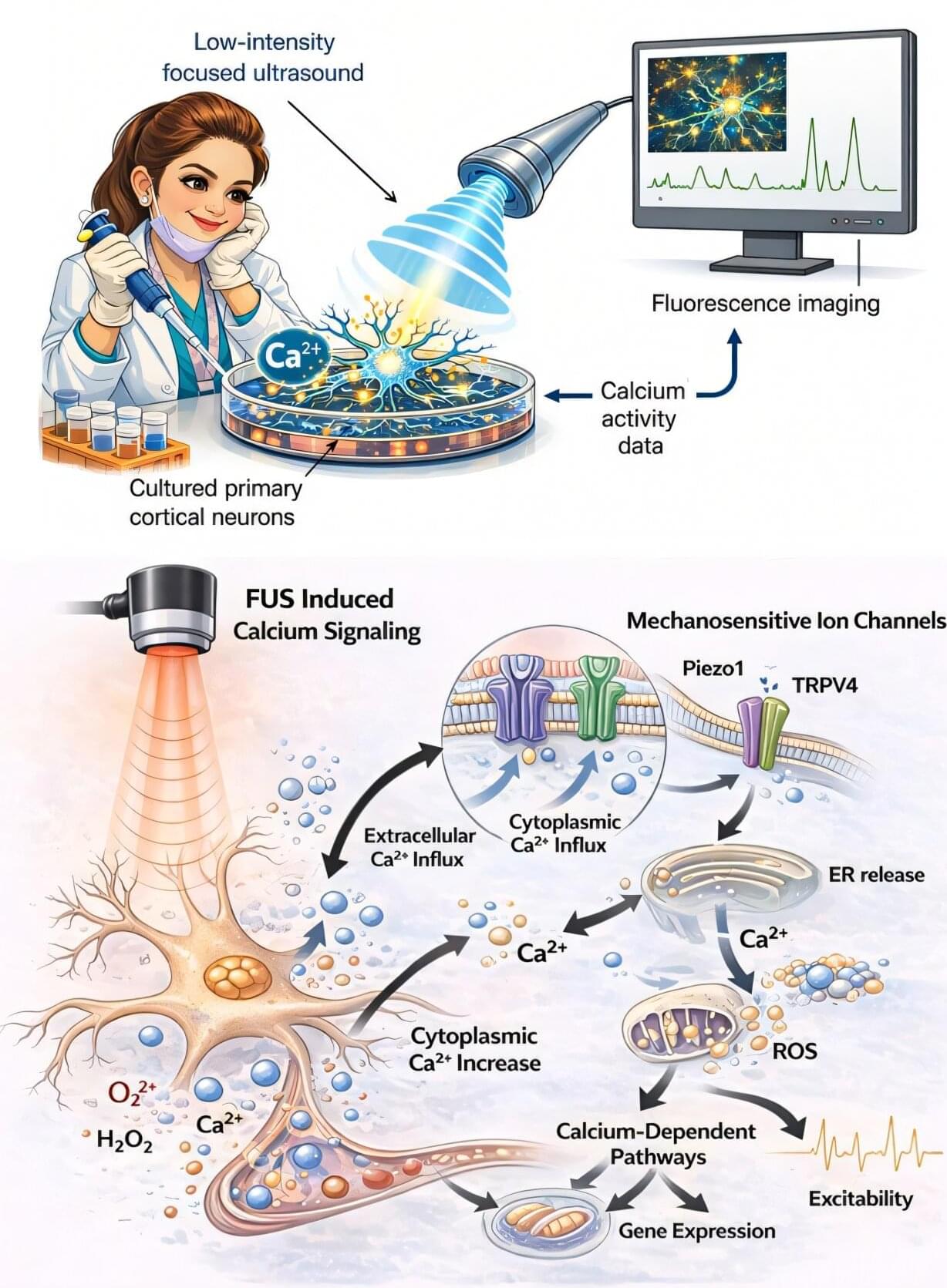

I still vividly remember the first time we observed neurons responding not to audible sound, but to concentrated, precisely calibrated ultrasonic pulses. On the screen in front of us, calcium signals from brain cells began to rise and fall in little waves. It was less about forcing the brain to adapt and more about listening to the brain and responding subtly.

Understanding how neurons interact and how neurological conditions like Parkinson’s disease affect this communication has been the focus of my study for many years. Calcium, a small ion that functions as a potent messenger inside cells, is at the center of this communication.

Neurons struggle to survive, connect, and operate correctly when calcium transmission is disrupted. Our team began to wonder if we might safely modify this fundamental signaling function without requiring invasive operations or drugs.

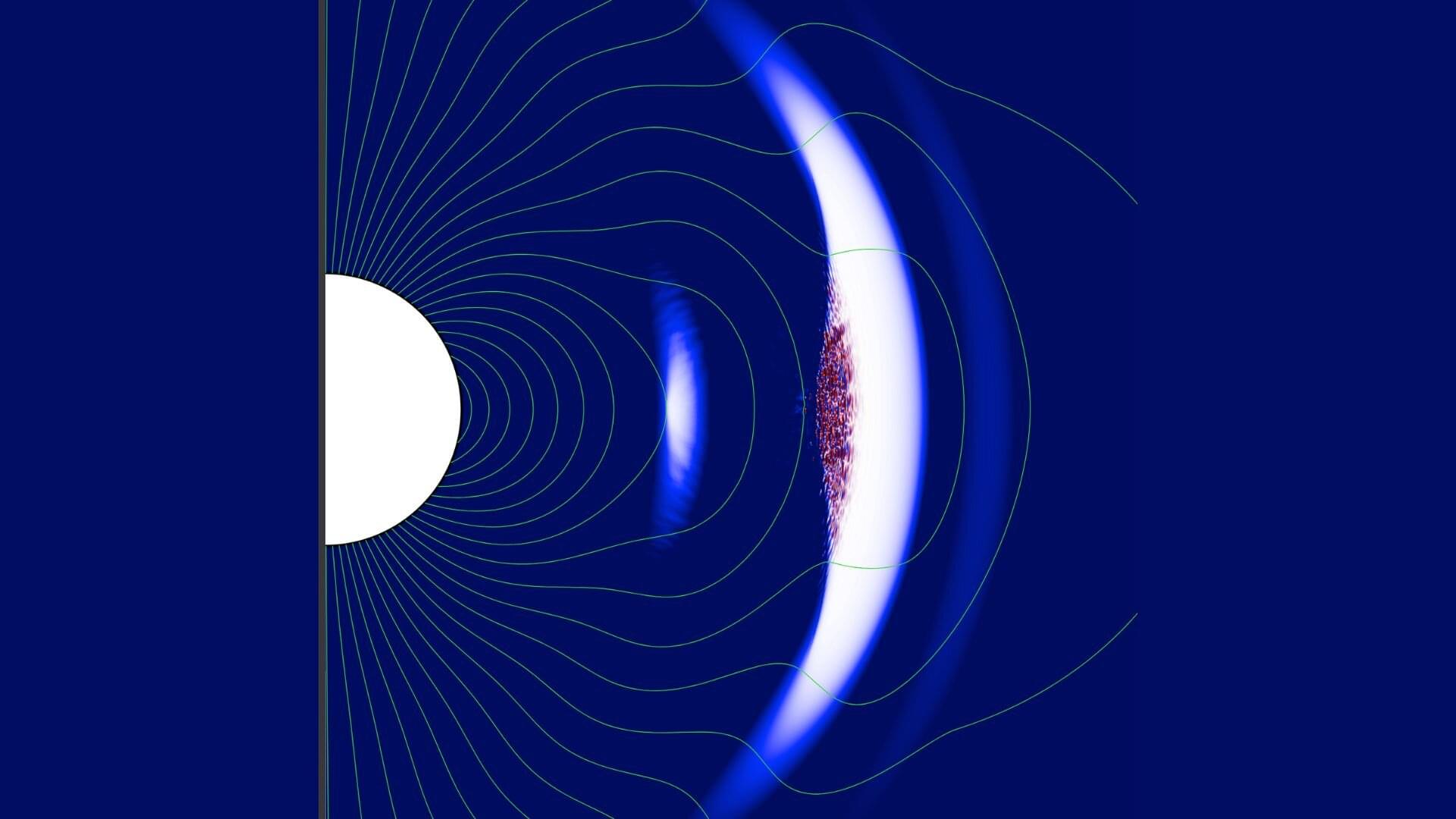

In a new study published in Physical Review Letters, scientists have performed the first global simulations of monster shocks—some of the strongest shocks in the universe—revealing how these extreme events in magnetar magnetospheres could be responsible for producing fast radio bursts (FRBs).

Magnetars are young neutron stars with extremely strong magnetic fields, reaching up to 10^15 Gauss on their surfaces. These cosmic powerhouses produce prolific X-ray activity and have emerged as candidates for explaining FRBs, mysterious millisecond-duration radio bursts detected from across the cosmos. The connection between magnetars and FRBs was strengthened in 2020 when a simultaneous X-ray and radio burst was observed from the galactic magnetar SGR 1935+2154.

The study explores monster shock formation in realistic magnetospheric geometry and was led by Dominic Bernardi, a graduate student at Washington University in St. Louis.

Researchers in Australia have unveiled the largest quantum simulation platform built to date, opening a new route to exploring the complex behavior of quantum materials at unprecedented scales.

Reporting in Nature, a team led by Michelle Simmons at the University of New South Wales (UNSW) Sydney has demonstrated a platform they call “Quantum Twins”: a two-dimensional array of around 15,000 individually controllable quantum dots. The researchers say the system could soon be used to simulate a wide range of exotic quantum effects that emerge in large, strongly correlated materials.

As quantum technologies advance, it is becoming increasingly important to understand how advanced quantum materials behave under different conditions.

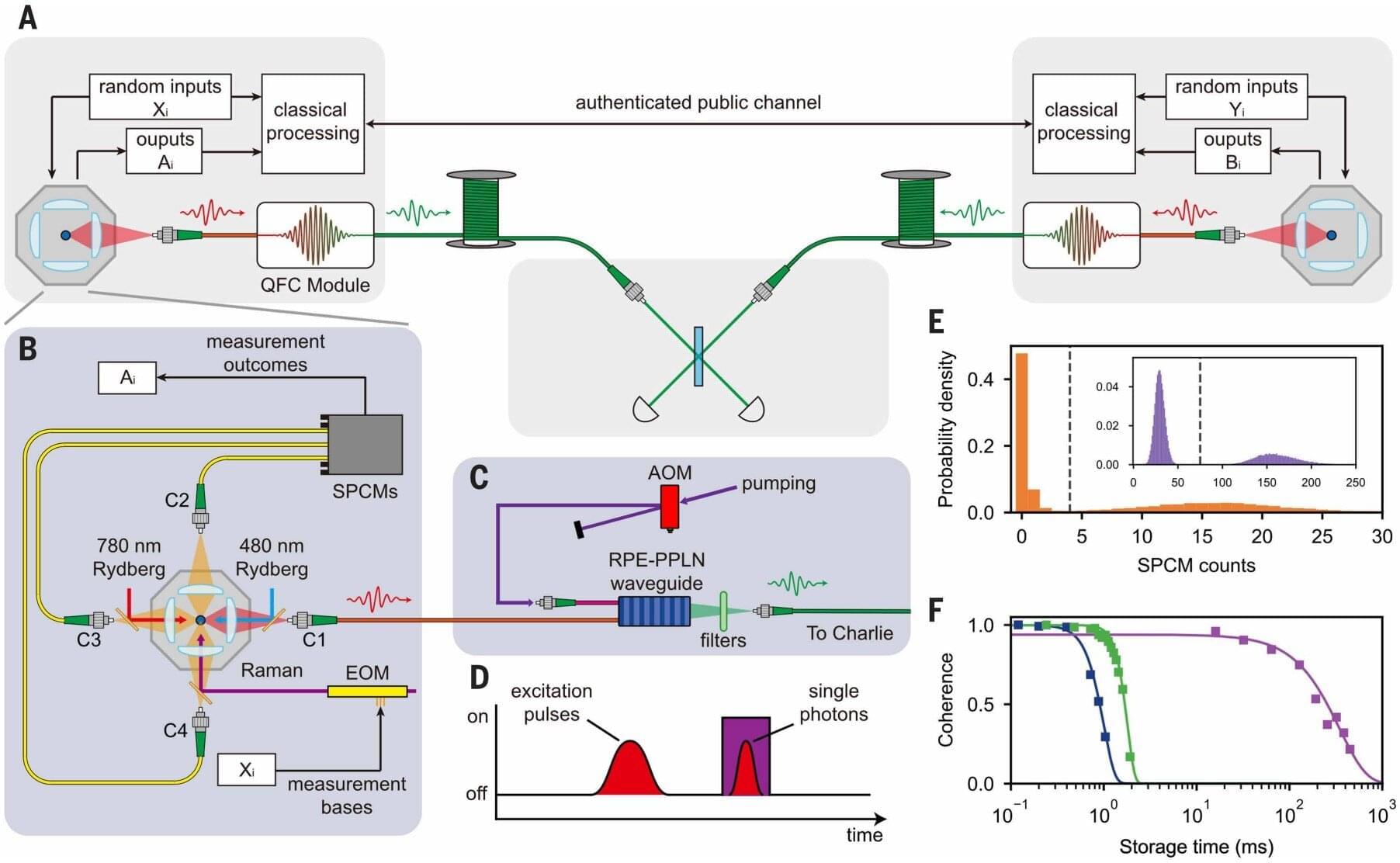

Concerns that quantum computers may soon be able to break previously secure communications have motivated researchers to develop new ways to encrypt information. One such approach is quantum key distribution (QKD), a quantum-based method in which eavesdropping attempts disrupt the quantum state, making unauthorized interception immediately detectable.

Previous attempts at this solution were limited by short distances and reliance on special devices, but a research team in China recently demonstrated the ability to maintain quantum encryption over longer distances. The research, published in Science, describes device-independent QKD (DI-QKD) between two single-atom nodes over up to 100 km of optical fiber.

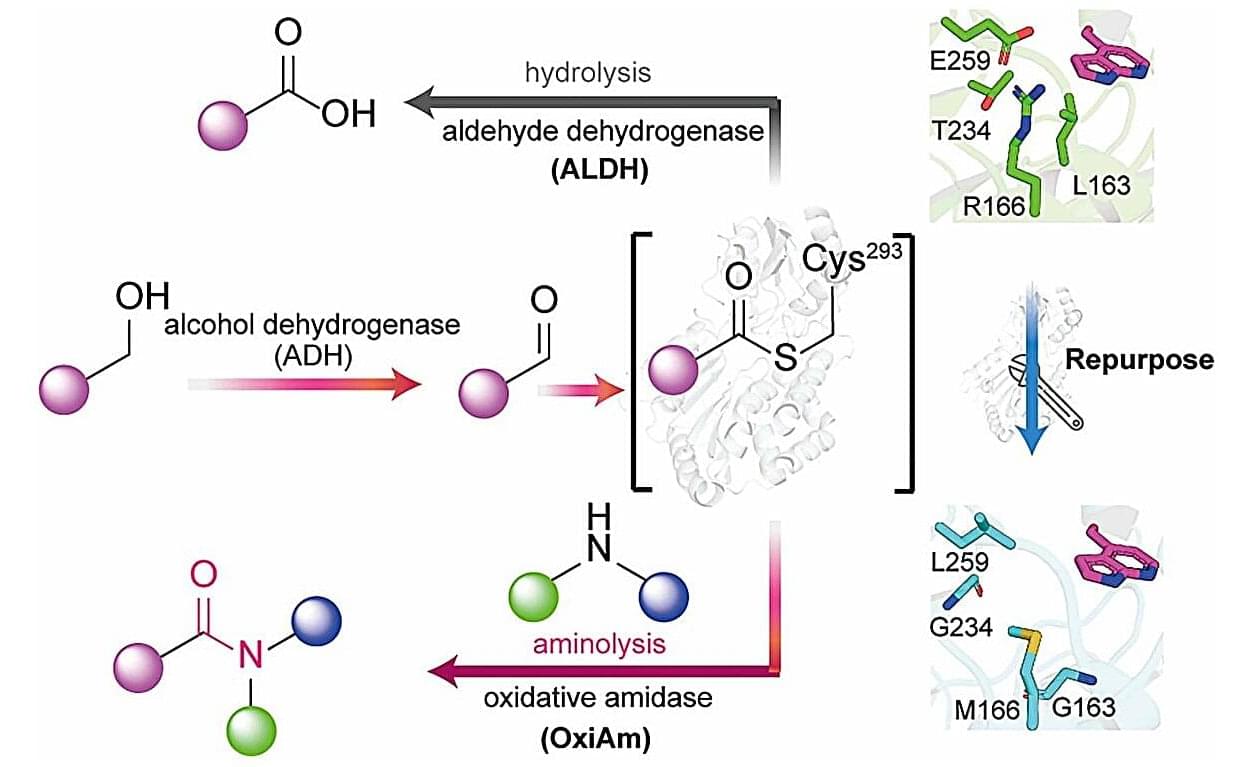

One chemical structure that shows up again and again in modern medicine is the amide bond, which links a carbonyl group (C=O) to a nitrogen atom. Amide bonds are so ubiquitous that 117 of the top 200 small-molecule drugs by retail sales in 2023 feature at least one. And now, researchers have discovered a clever new way to reengineer natural enzymes to build amides from simple chemicals like aldehydes and amines.

The team chose a naturally abundant enzyme family called aldehyde dehydrogenases (ALDHs), specifically p-hydroxybenzaldehyde dehydrogenase (PHBDD), which can efficiently convert aldehydes into acids. The team turned it into a new catalyst, known as an oxidative amidase (OxiAm), by modifying the enzyme’s internal pocket in two major ways: making it hydrophobic to prevent the formation of unwanted acids, and making it bigger so that larger, more diverse chemical parts could fit inside and be bonded together.

According to the results published in Science, the team was able to obtain amides directly from commercially available alcohols via a two-step enzymatic cascade reaction carried out in a single container. This approach could enable new, greener methods for producing five major drug molecules, including a key component of imatinib, an essential drug used to treat chronic myeloid leukemia and gastrointestinal stromal tumors.

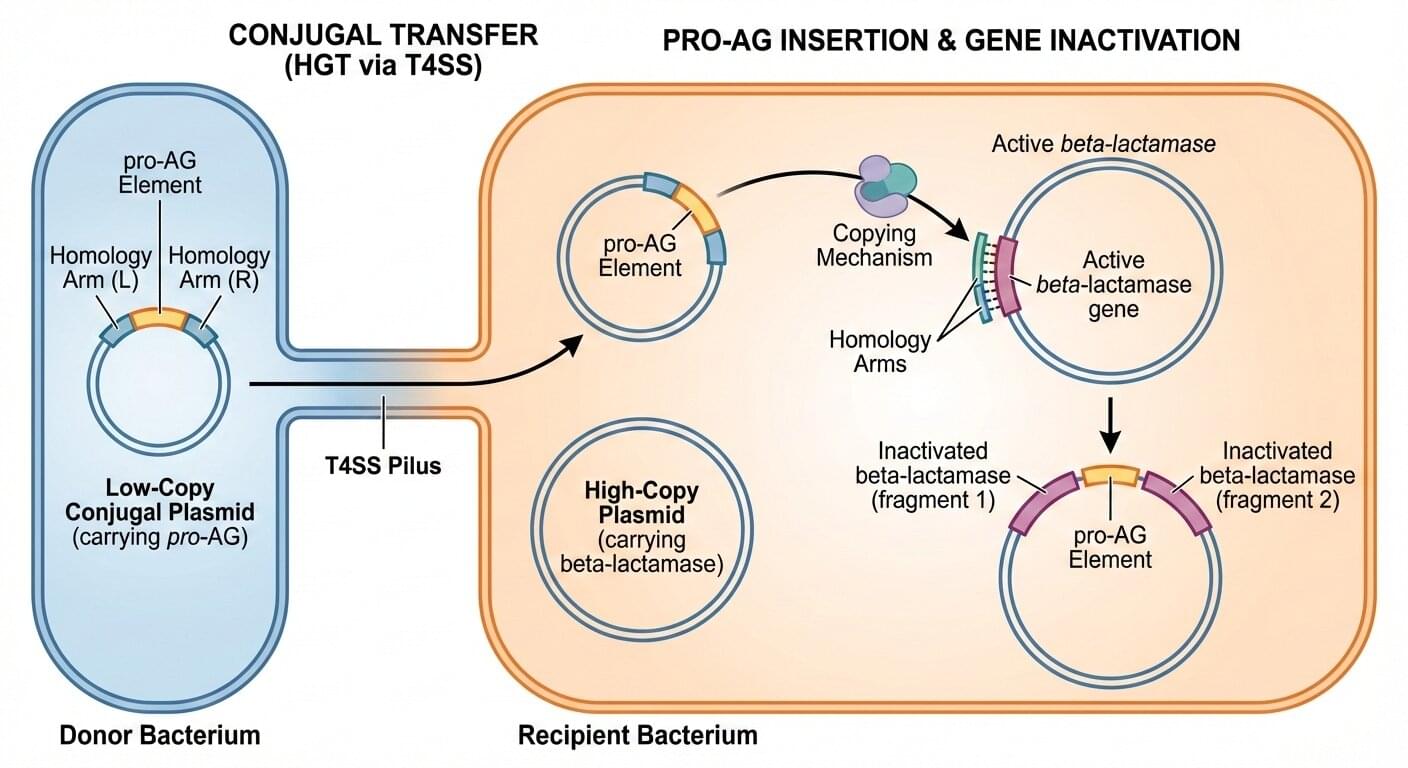

Antibiotic resistance (AR) has steadily accelerated in recent years to become a global health crisis. As deadly bacteria evolve new ways to elude drug treatments for a variety of illnesses, a growing number of “superbugs” have emerged, driving estimates of more than 10 million deaths worldwide per year by 2050.

Scientists are looking to recently developed technologies to address the pressing threat of antibiotic-resistant bacteria, which are known to flourish in hospital settings, sewage treatment areas, animal husbandry locations, and fish farms. University of California San Diego scientists have now applied cutting-edge genetics tools to counteract antibiotic resistance.

The laboratories of UC San Diego School of Biological Sciences Professors Ethan Bier and Justin Meyer have collaborated on a novel method of removing antibiotic-resistant elements from populations of bacteria. The researchers developed a new CRISPR-based technology similar to gene drives, which are being applied in insect populations to disrupt the spread of harmful properties, such as parasites that cause malaria. The new Pro-Active Genetics (Pro-AG) tool called pPro-MobV is a second-generation technology that uses a similar approach to disable drug resistance in populations of bacteria.