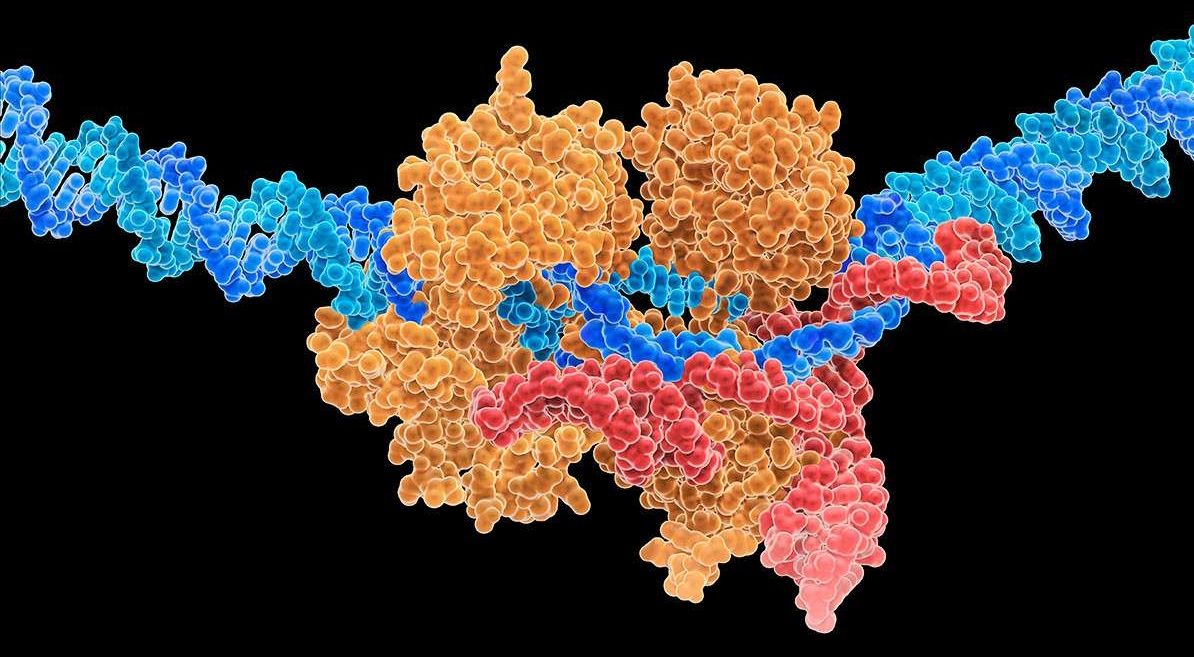

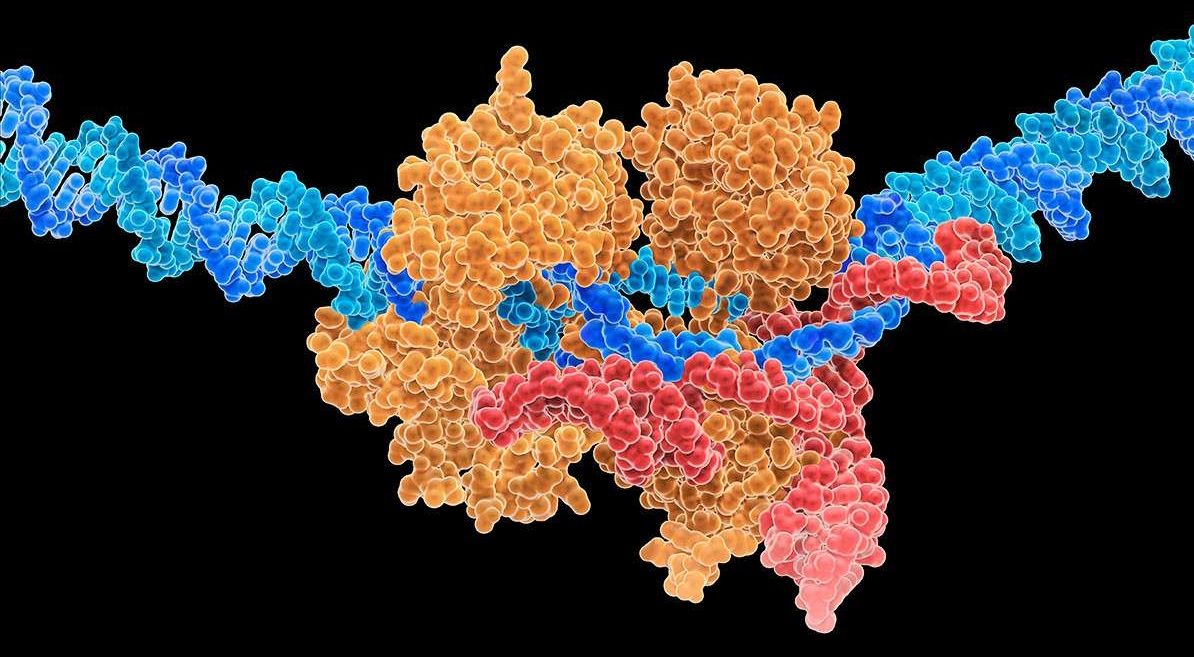

He Jiankui has now presented his controversial work at a gene editing summit in Hong Kong. CRISPR expert Helen O’Neill of University College London was there.

This animation by Rosanna Wan for the Royal Institution tells the fascinating story of Marie Tharp’s groundbreaking work to help prove Wegener’s theory.

The Short Film Showcase spotlights exceptional short videos created by filmmakers from around the web and selected by National Geographic editors. We look for work that affirms National Geographic’s belief in the power of science, exploration, and storytelling to change the world. The filmmakers created the content presented, and the opinions expressed are their own, not those of National Geographic Partners.

Know of a great short film that should be part of our Showcase? Email [email protected] to submit a video for consideration. See more from National Geographic’s Short Film Showcase at documentary.com

This wearable strap for your fingers allows you to text on your phone wirelessly 🖐️.

Yang N, Sen P. The senescent cell epigenome. Aging (Albany NY). 2018; 10:3590–3609. https://doi.org/10.18632/aging.

What would it take to build a new, artificial planet?

It’s not like moon-walking astronauts don’t already have plenty of hazards to deal with. There’s less gravity, extreme temperatures, radiation—and the whole place is aggressively dusty. If that weren’t enough, it also turns out that the visual-sensory cues we use to perceive depth and distance don’t work as expected—on the moon, human eyeballs can turn into scam artists.

During the Apollo missions, astronauts routinely underestimated the size of craters, the slopes of hilltops, and the distance to certain objects, a well-documented phenomenon. Objects appeared much closer than they were, which created headaches for mission control. Astronauts sometimes overexerted themselves and depleted oxygen supplies trying to reach objects that were farther away than expected.

This phenomenon has also become a topic of study for researchers trying to explain why human vision functions differently in space, why so many visual errors occurred, and what, if anything, we can do to prepare the next generation of space travelers.

Ketamine, a drug once associated with raucous parties, bright lights, and loud music, is increasingly being embraced as an alternative depression treatment for the millions of patients who don’t get better after trying traditional medications.

The latest provider of the treatment is Columbia University, one of the nation’s largest academic medical centers.