Researchers pitted the biggest computer chip in the world against a supercomputer to simulate combustion—and the megachip won the race by a mile.

Special thanks to Lieuwe Vinkhuyzen for checking that this very simplified view on building neural nets did not stray too far from reality.

The inhabitants of the Tesla fanboy echo chamber have heard regularly about the Tesla Dojo supercomputer, with almost nobody knowing what it was. As far as I know, it was first mentioned at Tesla Autonomy Day on April 22, 2019. More recently a few comments from George Hotz, Tesmanian, and Elon Musk himself have shed some light on this project.

Aurora 21 will help the US keep pace among the other nations who own the fastest supercomputers. Scientists plan on using it to map the connectome of the human brain.

Harun Šiljak, Trinity College Dublin

Google reported a remarkable breakthrough towards the end of 2019. The company claimed to have achieved something called quantum supremacy, using a new type of “quantum” computer to perform a benchmark test in 200 seconds. This was in stark contrast to the 10,000 years that would supposedly have been needed by a state-of-the-art conventional supercomputer to complete the same test.

Despite IBM’s claim that its supercomputer, with a little optimisation, could solve the task in a matter of days, Google’s announcement made it clear that we are entering a new era of incredible computational power.

Another argument for government to bring AI into its quantum computing program is the fact that the United States is a world leader in the development of computer intelligence. Congress is close to passing the AI in Government Act, which would encourage all federal agencies to identify areas where artificial intelligences could be deployed. And government partners like Google are making some amazing strides in AI, even creating a computer intelligence that can easily pass a Turing test over the phone by seeming like a normal human, no matter who it’s talking with. It would probably be relatively easy for Google to merge some of its AI development with its quantum efforts.

The other aspect that makes merging quantum computing with AI so interesting is that the AI could probably help to reduce some of the so-called noise of the quantum results. I’ve always said that the way forward for quantum computing right now is by pairing a quantum machine with a traditional supercomputer. The quantum computer could return results like it always does, with the correct outcome muddled in with a lot of wrong answers, and then humans would program a traditional supercomputer to help eliminate the erroneous results. The problem with that approach is that it’s fairly labor intensive, and you still have the bottleneck of having to run results through a normal computing infrastructure. It would be a lot faster than giving the entire problem to the supercomputer because you are only fact-checking a limited number of results pared down by the quantum machine, but it would still have to work on each of them one at a time.
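The pairing described above can be sketched in a few lines of Python. This is only a toy illustration under loud assumptions: `noisy_candidates` is a hypothetical stand-in for a quantum device (it just returns the right answer some of the time and random junk otherwise), and the "problem" is finding a divisor of a number, chosen because a classical machine can check each candidate cheaply. No real quantum hardware or library is involved.

```python
import random

def noisy_candidates(n, correct, shots, error_rate, rng):
    """Hypothetical stand-in for a quantum device: each shot returns the
    correct answer with probability (1 - error_rate), otherwise noise."""
    results = []
    for _ in range(shots):
        if rng.random() < error_rate:
            results.append(rng.randrange(2, n))  # a wrong (noisy) answer
        else:
            results.append(correct)
    return results

def classical_filter(n, candidates):
    """Classical post-processing: keep only the candidates that pass a
    cheap check (here, whether the candidate divides n)."""
    return sorted({c for c in candidates if n % c == 0})

rng = random.Random(42)
n = 221  # 13 * 17
raw = noisy_candidates(n, correct=13, shots=50, error_rate=0.7, rng=rng)
verified = classical_filter(n, raw)
print(len(raw), "raw results ->", verified)
```

The classical step only has to check the short list of returned candidates rather than search the whole problem space, but, as the paragraph notes, it still checks them one at a time.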

But imagine if we could simply train an AI to look at the data coming from the quantum machine, figure out what makes sense and what is probably wrong without human intervention. If that AI were driven by a quantum computer too, the results could be returned without any hardware-based delays. And if we also employed machine learning, then the AI could get better over time. The more problems being fed to it, the more accurate it would get.

Quantum computers can solve problems in seconds that would take “ordinary” computers millennia, but their sensitivity to interference is majorly holding them back. Now, researchers claim they’ve created a component that drastically cuts down on error-inducing noise.

Quantum computers use quantum bits, or qubits, which can represent a one, a zero, or any combination of the two simultaneously. This is thanks to the quantum phenomenon known as superposition.

Another property, quantum entanglement, allows for qubits to be linked together, and changing the state of one qubit will also change the state of its entangled partner.
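As a rough illustration of these two properties, the sketch below represents qubit states as plain lists of amplitudes and samples measurements from them. It is a toy classical simulation, not how a quantum computer is programmed; the function names (`plus_state`, `bell_state`, `measure`) are made up for this example. The entangled pair used here is the standard Bell state, where the two qubits always give matching measurement results.

```python
import math
import random

def plus_state():
    """One qubit in an equal superposition of 0 and 1."""
    a = 1 / math.sqrt(2)
    return [a, a]  # amplitudes for |0>, |1>

def bell_state():
    """Two entangled qubits: equal superposition of 00 and 11."""
    a = 1 / math.sqrt(2)
    return [a, 0.0, 0.0, a]  # amplitudes for |00>, |01>, |10>, |11>

def probabilities(state):
    """Measurement probabilities are the squared magnitudes of the amplitudes."""
    return [abs(amp) ** 2 for amp in state]

def measure(state, rng):
    """Sample one measurement outcome and return it as a bit string."""
    outcome = rng.choices(range(len(state)), weights=probabilities(state), k=1)[0]
    n_bits = (len(state) - 1).bit_length()
    return format(outcome, f"0{n_bits}b")

rng = random.Random(0)
print([measure(plus_state(), rng) for _ in range(8)])  # a mix of '0' and '1'
print([measure(bell_state(), rng) for _ in range(8)])  # only '00' or '11'
```

In the entangled case the individual results are random, yet the two bits always agree, which is the correlation that entanglement provides.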

Thanks to these two properties, quantum computers of a few dozen qubits can outperform massive supercomputers in certain very specific tasks. But there are several issues holding quantum computers back from solving the world’s toughest problems, one of which is how prone qubits are to error.

New detector breakthrough pushes boundaries of quantum computing

Circa 2018

After 12 years of work, researchers at the University of Manchester in England have completed construction of a “SpiNNaker” (Spiking Neural Network Architecture) supercomputer. It can simulate the internal workings of up to a billion neurons through a whopping one million processing units.

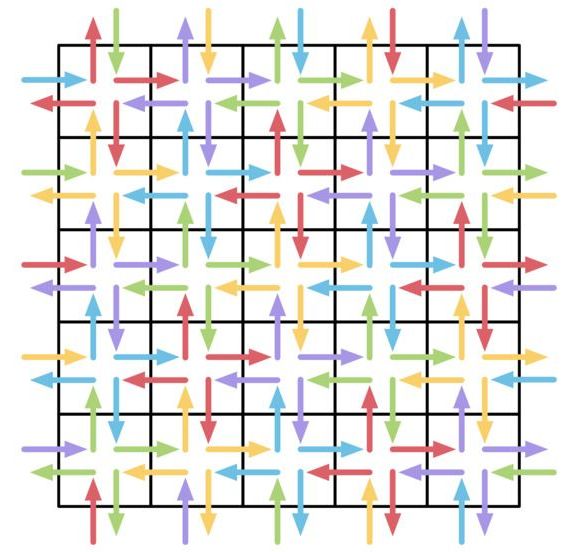

The human brain contains approximately 100 billion neurons, exchanging signals through hundreds of trillions of synapses. While these numbers are imposing, a digital brain simulation needs far more than raw processing power: rather, what’s needed is a radical rethinking of the standard computer architecture on which most computers are built.

“Neurons in the brain typically have several thousand inputs; some up to quarter of a million,” Prof. Stephen Furber, who conceived and led the SpiNNaker project, told us. “So the issue is communication, not computation. High-performance computers are good at sending large chunks of data from one place to another very fast, but what neural modeling requires is sending very small chunks of data (representing a single spike) from one place to many others, which is quite a different communication model.”
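The communication pattern Furber describes, one tiny message fanned out to many destinations, can be sketched as follows. This is a minimal illustration and not SpiNNaker's actual routing scheme; the `SpikeRouter` class and its methods are hypothetical names invented for the example.

```python
from collections import defaultdict

class SpikeRouter:
    """Toy one-to-many delivery: each spike is a tiny event
    (source id, timestep) copied to every connected target."""

    def __init__(self):
        self.fanout = defaultdict(list)  # source neuron -> target neurons
        self.inbox = defaultdict(list)   # target neuron -> received spikes

    def connect(self, source, target):
        self.fanout[source].append(target)

    def fire(self, source, timestep):
        """Deliver one spike to all targets; return how many copies were sent."""
        targets = self.fanout[source]
        for target in targets:
            self.inbox[target].append((source, timestep))
        return len(targets)

router = SpikeRouter()
for target in range(1, 1001):   # neuron 0 feeds 1,000 other neurons
    router.connect(0, target)

sent = router.fire(0, timestep=3)
print(sent)              # 1000
print(router.inbox[5])   # [(0, 3)]
```

Each message carries almost no data, so the cost is dominated by the number of deliveries rather than their size, which is the opposite of the bulk transfers that conventional high-performance machines are built for.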