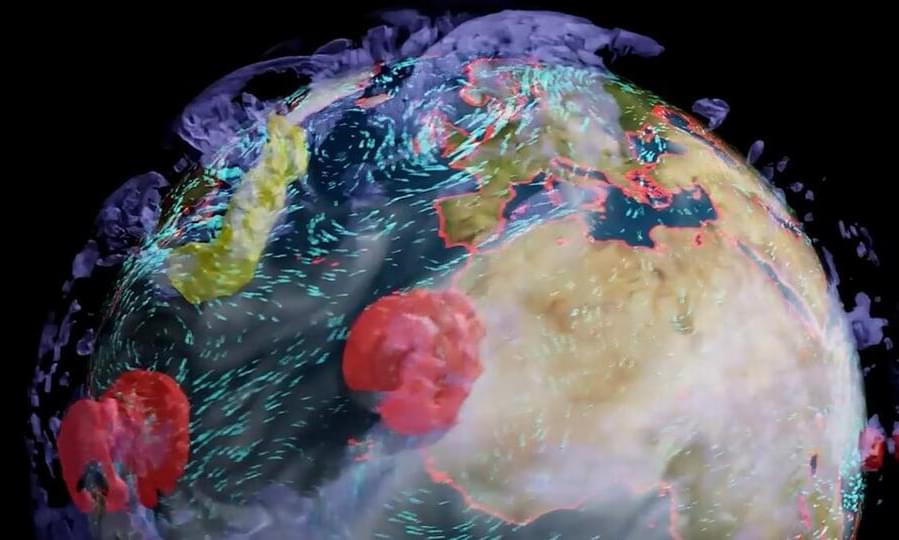

NVIDIA plans to build Earth-2, the world’s most powerful AI supercomputer dedicated to predicting climate change.

The earth is warming. The past seven years are on track to be the seven warmest on record. The emissions of greenhouse gases from human activities are responsible for approximately 1.1°C of average warming since the period 1850–1900.

What we’re experiencing is very different from the global average. We experience extreme weather — historic droughts, unprecedented heatwaves, intense hurricanes, violent storms and catastrophic floods. Climate disasters are the new norm.

We need to confront climate change now. Yet, we won’t feel the impact of our efforts for decades. It’s hard to mobilize action for something so far in the future. But we must know our future today — see it and feel it — so we can act with urgency.