Category: robotics/AI

Canada will get its first universal quantum computer from IBM

Quantum computing is still rare enough that merely installing a system in a country is a breakthrough, and IBM is taking advantage of that novelty. The company has forged a partnership with the Canadian province of Quebec to install what it says is Canada’s first universal quantum computer. The five-year deal will see IBM install a Quantum System One as part of a Quebec-IBM Discovery Accelerator project tackling scientific and commercial challenges.

The team-up will see IBM and the Quebec government foster microelectronics work, including progress in chip packaging thanks to an existing IBM facility in the province. The two also plan to show how quantum and classical computers can work together to address scientific challenges, and expect quantum-powered AI to help discover new medicines and materials.

IBM didn’t say exactly when it would install the quantum computer. However, it will be just the fifth Quantum System One installation planned by 2023, following similar partnerships in Germany, Japan, South Korea and the US. Canada is joining a relatively exclusive club, then.

Mimicking the brain to realize ‘human-like’ virtual assistants

Speech is more than just a form of communication. A person’s voice conveys emotions and personality and is a unique trait we can recognize. Our use of speech as a primary means of communication is a key reason for the development of voice assistants in smart devices and technology. Typically, virtual assistants analyze speech and respond to queries by converting the received speech signals into a model they can understand and process to generate a valid response. However, they often have difficulty capturing and incorporating the complexities of human speech and end up sounding very unnatural.

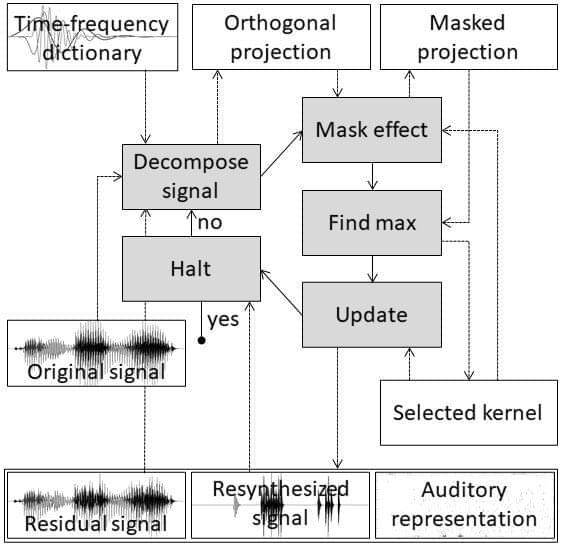

Now, in a study published in the journal IEEE Access, Professor Masashi Unoki from Japan Advanced Institute of Science and Technology (JAIST), and Dung Kim Tran, a doctoral course student at JAIST, have developed a system that can capture the information in speech signals similarly to how humans perceive speech.

“In humans, the auditory periphery converts the information contained in input speech signals into neural activity patterns (NAPs) that the brain can identify. To emulate this function, we used a matching pursuit algorithm to obtain sparse representations of speech signals, or signal representations with the minimum possible significant coefficients,” explains Prof. Unoki. “We then used psychoacoustic principles, such as the equivalent rectangular bandwidth scale, gammachirp function, and masking effects to ensure that the auditory sparse representations are similar to that of the NAPs.”
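The matching pursuit step Prof. Unoki mentions can be sketched in a few lines. This is only a generic illustration of the greedy algorithm, not the authors' implementation: the study builds its dictionary from psychoacoustic principles (gammachirp functions on an equivalent rectangular bandwidth scale), whereas the sketch below assumes random unit-norm atoms, and the names `matching_pursuit`, `atoms`, and `toy_signal` are hypothetical.

```python
import numpy as np


def matching_pursuit(signal, dictionary, n_atoms):
    """Greedily approximate `signal` with at most `n_atoms` dictionary columns.

    `dictionary` has shape (signal_length, n_dictionary_atoms) and its
    columns are assumed to be unit-norm.
    """
    residual = np.asarray(signal, dtype=float).copy()
    coeffs = np.zeros(dictionary.shape[1])
    for _ in range(n_atoms):
        # Correlate every atom with the part of the signal still unexplained.
        correlations = dictionary.T @ residual
        best = int(np.argmax(np.abs(correlations)))
        # Keep the best-matching atom and subtract its contribution.
        coeffs[best] += correlations[best]
        residual -= correlations[best] * dictionary[:, best]
    return coeffs, residual


# Hypothetical toy data: random unit-norm atoms stand in for auditory filters.
rng = np.random.default_rng(0)
atoms = rng.standard_normal((64, 256))
atoms /= np.linalg.norm(atoms, axis=0)
toy_signal = 2.0 * atoms[:, 3] - 1.5 * atoms[:, 17]

coeffs, residual = matching_pursuit(toy_signal, atoms, n_atoms=5)
print(np.count_nonzero(coeffs), np.linalg.norm(residual))
```

Because each pass selects a single atom, the number of nonzero coefficients never exceeds `n_atoms`, which is what makes the representation sparse. Swapping the random atoms for auditory-inspired ones would change the dictionary but not the loop.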

The brain’s secret to life-long learning can now come as hardware for artificial intelligence

When the human brain learns something new, it adapts. But when artificial intelligence learns something new, it tends to forget information it already learned.

As companies use more and more data to improve how AI recognizes images, learns languages and carries out other complex tasks, a paper publishing in Science this week shows a way that computer chips could dynamically rewire themselves to take in new data like the brain does, helping AI to keep learning over time.

“The brains of living beings can continuously learn throughout their lifespan. We have now created an artificial platform for machines to learn throughout their lifespan,” said Shriram Ramanathan, a professor in Purdue University’s School of Materials Engineering who specializes in discovering how materials could mimic the brain to improve computing.

Captained: The European immigrants prevailed in that war, as well as in a long series of conflicts with other tribes

On this land taken from Indigenous Peoples, a new nation was eventually born, largely built by those whose ancestries traced back to the Old World via immigration and slavery.

As the country grew, inventions like the telephone, airplane, and Internet helped usher in today’s interconnected world. But the inexorable march of technological progress has come at great cost to the health of the planet, particularly because of global dependence on fossil fuels. The United Nations declared in 2017 that a Decade of Ocean Science for Sustainable Development would be held from 2021 to 2030. This Ocean Decade calls for a worldwide effort to reverse the oceans’ degradation.

The dawn of this decade, 2020, also marked the 400th anniversary of the Mayflower’s journey. Plymouth 400, a cultural nonprofit, has been working for more than a decade to commemorate the anniversary in ways that honor all aspects of this history, said spokesperson Brian Logan. Events began in 2020, but one of the most innovative launches is still waiting in the wings—a newfangled nautical craft, the Mayflower Autonomous Ship, or MAS.

Does AI Improve Human Judgment?

Decision-making has mostly revolved around learning from mistakes and making gradual, steady improvements. For several centuries, evolutionary experience has served humans well when it comes to decision-making. So, it is safe to say that most decisions human beings make are based on trial and error. Additionally, humans rely heavily on data to make key decisions. The larger the amount of high-integrity data available, the more balanced and rational their decisions will be. However, in the age of big data analytics, businesses and governments around the world are reluctant to rely on basic human instinct and know-how for major decisions. A large percentage of companies globally use big data for this purpose. As a result, AI is being adopted for decision-making far more widely today than in the past.

However, there are several debatable aspects of using AI in decision-making. Firstly, are *all* the decisions made with inputs from AI algorithms correct? And does the involvement of AI in decision-making cause avoidable problems? Read on to find out. Involving AI in decision-making simplifies the process of making strategies for businesses and governments around the world. However, AI has had its fair share of missteps on several occasions.

NASA’s X-48 Aircraft Test Flights Promise a ‘Green Airliner’ For the Future

Fewer CO2 emissions, more cargo space.

California-based startup Natilus revealed a new unmanned aircraft that it believes will make air cargo more sustainable as well as cost-effective, a report from *NewAtlas* reveals.

The company designed a blended wing body aircraft, similar to NASA’s X-48 “green airliner” concept, which it says allows it to offer “an estimated 60% more cargo volume than traditional aircraft of the same weight while reducing costs and carbon dioxide per pound by 50%.”

Metaverse: what is it and what possibilities does it offer?

The concept of the Metaverse first blew up in October of 2021 when the company formerly known as Facebook announced its rebranding to Meta with an intent to build the metaverse, a virtual world where users could interact with each other and even play games. Meta, at the time, was said to be hiring 10,000 engineers to build the tools of the Metaverse.

The news made headlines around the world and had people asking: what exactly is a Metaverse? In short, it is an extension of our world, complete with concert venues, museums, and even robot training grounds. In fact, what you can build is only limited by your imagination. How do the Metaverse and Omniverse work together? Can one exist without the other? We have the answers to all your questions and more.

Bristol scientists develop insect-sized flying robots with flapping wings

Are we to see an evolution of drone designs now?

Researchers at the University of Bristol in the U.K. have designed a flying robot that flaps its wings and can generate more power than a similar-sized insect, the kind of animal that inspired it. The robot could pave the way for smaller, lighter, and more effective drones, the researchers claimed in an institutional press release.

When it comes to flying robots, researchers have relied largely on propeller-based designs. Even though it is well known that bio-inspired flapping wings are a much more efficient method of flying, replicating them in a flying object has been challenging. As the researchers stated in the press release, the use of motors, gears, and complex transmission systems to achieve the flapping movement adds to the complexity as well as the weight of the entire system, which has many undesired effects. Drones are great but not very efficient. Researchers in Bristol may have cracked what it takes to make flapping-wing flying robots.