Consciousness, and the ways in which it can become impaired after certain brain injuries, are not well understood. As a result, disorders of consciousness (DOC), such as coma, vegetative states and minimally conscious states, are difficult to treat. But a new study, published in Nature Neuroscience, suggests that AI might help researchers gain some traction on this problem. The research team developed an adversarial AI framework to probe what is happening in the brain during states of reduced consciousness and how those states might be approached therapeutically.

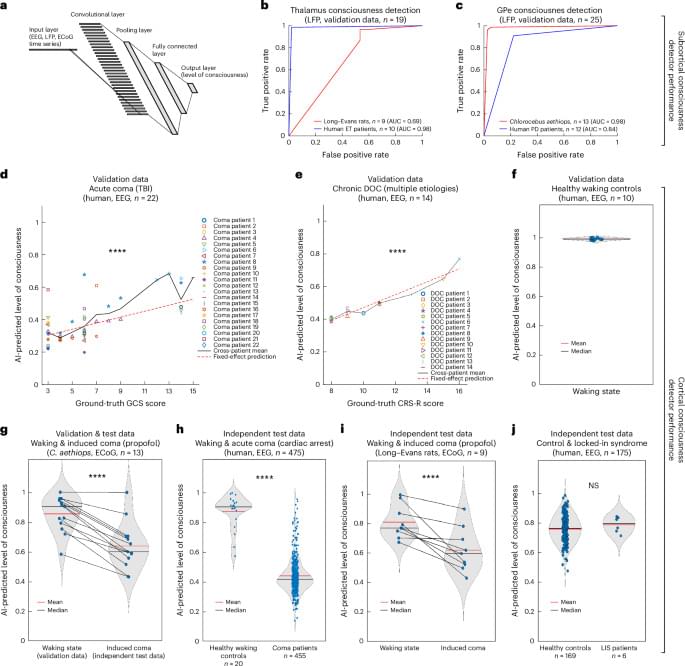

To better understand the mechanisms behind impaired consciousness, the researchers developed two types of AI models and had them play a kind of game. On one side, a biologically plausible simulation of the human brain produced EEG-like signals designed to resemble those of real conscious and unconscious brains. On the other, AI agents called deep convolutional neural networks (DCNNs) judged the level of consciousness reflected in those signals. The DCNNs were first trained on 680,000 ten-second recordings of brain activity from conscious and unconscious humans, monkeys, bats and rats, so that they could learn which neural signals relate to differing levels of consciousness.

“To decode consciousness from these signals, we trained three separate DCNNs, each specialized for a different brain region, to output a continuous score from 0 (unconscious) to 1 (fully conscious): a cortical consciousness detector (ctx-DCNN), a thalamic consciousness detector (th-DCNN) and a pallidal consciousness detector (pal-DCNN). The ctx-DCNN was trained on continuous consciousness levels derived from clinical scales (GCS and CRS-R), enabling it to recognize graded states of consciousness,” the study authors explain.
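The scoring setup the authors describe, with a separate detector for each brain region that turns a recording into a single number between 0 and 1, can be illustrated with a toy sketch. This is not the study's model. The single convolution layer, the kernel weights, the 100 Hz sampling rate and the linear mapping of Glasgow Coma Scale totals (which run from 3 to 15) onto the 0-to-1 range are all hypothetical choices made for illustration; the study does not say how its continuous targets were derived from the clinical scales.

```python
import math
import random


def conv1d(signal, kernel):
    """Valid-mode 1D cross-correlation, the operation CNN layers compute."""
    k = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k))
            for i in range(len(signal) - k + 1)]


def relu(xs):
    return [max(0.0, x) for x in xs]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def consciousness_score(signal, kernel, weight, bias):
    """Toy detector: conv -> ReLU -> global average pooling -> sigmoid.

    Returns a score between 0 (unconscious) and 1 (fully conscious).
    A real DCNN stacks many learned layers; these parameters are made up.
    """
    features = relu(conv1d(signal, kernel))
    pooled = sum(features) / len(features)
    return sigmoid(weight * pooled + bias)


def gcs_to_target(gcs):
    """Hypothetical linear mapping of a GCS total (3 to 15) onto [0, 1]."""
    if not 3 <= gcs <= 15:
        raise ValueError("GCS totals range from 3 to 15")
    return (gcs - 3) / 12


# One detector per region, named after the study's ctx-, th- and pal-DCNNs.
# The parameter values are hypothetical placeholders, not trained weights.
DETECTORS = {
    "ctx": {"kernel": [0.25, 0.5, 0.25], "weight": 2.0, "bias": -0.5},
    "th": {"kernel": [0.5, -0.5], "weight": 1.5, "bias": -0.3},
    "pal": {"kernel": [1.0, 0.0, -1.0], "weight": 1.0, "bias": -0.2},
}

# Usage: score one synthetic ten-second trace (hypothetical 100 Hz sampling).
rng = random.Random(0)
trace = [rng.gauss(0.0, 1.0) for _ in range(1000)]
scores = {name: consciousness_score(trace, **params)
          for name, params in DETECTORS.items()}
```

In a training loop, each detector's output would be compared with a target such as `gcs_to_target(...)` for the corresponding recording, which is what lets a model learn graded rather than all-or-nothing states.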