
The Man behind Stable Diffusion

An interview with Emad Mostaque, founder of Stability AI.

OUTLINE:

0:00 — Intro.

1:30 — What is Stability AI?

3:45 — Where does the money come from?

5:20 — Is this the CERN of AI?

6:15 — Who gets access to the resources?

8:00 — What is Stable Diffusion?

11:40 — What if your model produces bad outputs?

14:20 — Do you employ people?

16:35 — Can you prevent the corruption of profit?

19:50 — How can people find you?

22:45 — Final thoughts, let’s destroy PowerPoint.

Links:

Homepage: https://ykilcher.com

Merch: https://ykilcher.com/merch

YouTube: https://www.youtube.com/c/yannickilcher

Twitter: https://twitter.com/ykilcher

Discord: https://ykilcher.com/discord

LinkedIn: https://www.linkedin.com/in/ykilcher

If you want to support me, the best thing to do is to share the content :)

If you want to support me financially (completely optional and voluntary, but a lot of people have asked for this):

SubscribeStar: https://www.subscribestar.com/yannickilcher

Patreon: https://www.patreon.com/yannickilcher

Bitcoin (BTC): bc1q49lsw3q325tr58ygf8sudx2dqfguclvngvy2cq

Ethereum (ETH): 0x7ad3513E3B8f66799f507Aa7874b1B0eBC7F85e2

Litecoin (LTC): LQW2TRyKYetVC8WjFkhpPhtpbDM4Vw7r9m

Monero (XMR): 4ACL8AGrEo5hAir8A9CeVrW8pEauWvnp1WnSDZxW7tziCDLhZAGsgzhRQABDnFy8yuM9fWJDviJPHKRjV4FWt19CJZN9D4n

New-and-Improved Content Moderation Tooling

To help developers protect their applications against possible misuse, we are introducing the faster and more accurate Moderation endpoint. This endpoint provides OpenAI API developers with free access to GPT-based classifiers that detect undesired content — an instance of using AI systems to assist with human supervision of these systems. We have also released both a technical paper describing our methodology and the dataset used for evaluation.

When given a text input, the Moderation endpoint assesses whether the content is sexual, hateful, violent, or promotes self-harm, all of which our content policy prohibits. The endpoint has been trained to be quick and accurate and to perform robustly across a range of applications. Importantly, this reduces the chances of products “saying” the wrong thing, even when deployed to users at scale. As a consequence, AI can unlock benefits in sensitive settings, like education, where it could not otherwise be used with confidence.
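As a rough illustration of how a developer might call such an endpoint, here is a minimal Python sketch. It is not taken from the announcement: the URL, the `{"input": ...}` request body, and the `results[0]["categories"]` response layout reflect the publicly documented OpenAI moderation API as we understand it, and the helper names (`build_moderation_request`, `flagged_categories`, `moderate`) are hypothetical.

```python
import json
import urllib.request

# Assumption: the publicly documented moderation URL, not stated in the post.
MODERATION_URL = "https://api.openai.com/v1/moderations"


def build_moderation_request(text, api_key):
    """Build (but do not send) a POST request carrying the text to classify."""
    body = json.dumps({"input": text}).encode("utf-8")
    return urllib.request.Request(
        MODERATION_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def flagged_categories(response):
    """Return the sorted names of categories marked true in the first result.

    Assumes a response shaped like
    {"results": [{"flagged": bool, "categories": {name: bool, ...}}]}.
    """
    result = response["results"][0]
    return sorted(name for name, hit in result["categories"].items() if hit)


def moderate(text, api_key):
    """Send the request and decode the JSON reply (needs network and a real key)."""
    request = build_moderation_request(text, api_key)
    with urllib.request.urlopen(request) as reply:
        return json.load(reply)
```

An application would typically call `moderate(user_text, api_key)` before displaying or forwarding the text, and block or escalate it when `flagged_categories` returns a non-empty list.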

“An engine for the imagination”: an interview with David Holz, CEO of AI image generator Midjourney

AI-powered image generators like OpenAI’s DALL-E and Google’s Imagen are just beginning to move into the mainstream. David Holz is the CEO of Midjourney, the company behind a popular AI image generator of the same name. In this interview, he explains how the technology works and how it’s going to change the world.

Elon Musk reveals more details about Tesla Robot, sees people gifting it to elderly parents

Tesla CEO Elon Musk has revealed more details about Tesla Optimus, the company’s upcoming humanoid robot, and how he sees the product rolling out over the next decade.

Over the last few years, Musk has been getting quite cozy with the Chinese government.

In a country known for its protectionism, the CEO managed to score for Tesla the first car factory in China wholly owned by a foreign automaker.

The Future of AI-Generated Art Is Here: An Interview With Jordan Tanner

Unlike many of the so-called “artists” strewing junk around the halls of modern art museums, Jordan Tanner is actually pushing the frontiers of his craft. His eclectic portfolio includes vaporwave-inspired VR experiences, NFTs & 3D-printed figurines for Popular Front, and animated art for this very magazine. His recent AI-generated art made using OpenAI’s DALL-E software was called “STUNNING” by Lee Unkrich, the director of Coco and Toy Story 3.

We interviewed the UK-born, Israel-based artist about the imminent AI-generated art revolution and why all is not lost when it comes to the future of art. In Tanner’s eyes, AI-generated art is similar to having the latest, flashiest Nikon camera—it doesn’t automatically make you a professional photographer. Tanner also created a series of unique, AI-generated pieces for this interview which can be enjoyed below.

Thanks for talking to Countere, Jordan. Can you tell us a little about your background as an artist?

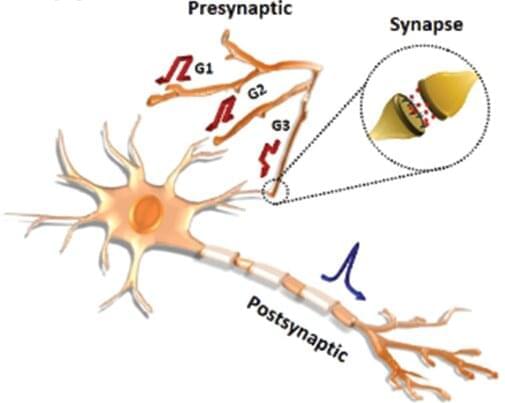

Development of dendritic-network-implementable artificial neurofiber transistors

Advances in artificial-intelligence-based technologies have led to an astronomical increase in the amounts of data available for processing by computers. Existing computing methods often process data sequentially and therefore have large time and power requirements for processing massive quantities of information. Hence, a transition to a new computing paradigm is required to solve such challenging issues. Researchers are currently working towards developing energy-efficient neuromorphic computing technologies and hardware that are capable of processing massive amounts of information by mimicking the structure and mechanisms of the human brain.

The Korea Institute of Science and Technology (KIST) has reported that a research team led by Dr. Jung ah Lim and Dr. Hyunsu Ju of the Center for Opto-electronic Materials and Devices has successfully developed organic neurofiber transistors with an architecture and functions similar to those of neurons in the human brain, which can be used as a neural network. Research on devices that can function as neurons and synapses is needed so that large-scale computations can be performed in a manner similar to data processing in the human brain. Unlike previously developed devices that act as either neurons or synapses, the artificial neurofiber transistors developed at KIST can mimic the behaviors of both neurons and synapses. By connecting the transistors in arrays, one can easily create a structure similar to a neural network.

Biological neurons have fibrous branches that can receive multiple stimuli simultaneously, and their signal transmission is mediated by ion migration stimulated by electrical signals. The KIST researchers built the artificial neurofibers from fibrous transistors that they had developed in 2019. They devised memory transistors that, like synapses, remember the strengths of the applied electrical signals and, upon receiving electrical stimuli from the neurofiber transistors, transmit them via redox reactions between the semiconductor channels and ions within the insulators. These artificial neurofibers also mimic the signal summation functionality of neurons.
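The two behaviors described here, synapse-like memory of signal strength and neuron-like summation of several inputs, can be pictured with a toy software model. The Python sketch below is only an abstraction for illustration: it does not model the device physics or the KIST team's method, and the class `ToySynapse`, the function `summed_output`, and the `learning_rate` parameter are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ToySynapse:
    """Hypothetical stand-in for a memory element that retains signal strength."""

    weight: float = 0.0
    learning_rate: float = 0.1  # assumed value, chosen only for illustration

    def stimulate(self, strength):
        # Nudge the stored weight toward the strength of the applied signal,
        # so repeated stimuli leave a lasting trace.
        self.weight += self.learning_rate * (strength - self.weight)

    def transmit(self, signal):
        # Pass the signal on, scaled by the remembered weight.
        return self.weight * signal


def summed_output(synapses, signals):
    """Add up the weighted signals arriving on all branches at once."""
    return sum(s.transmit(x) for s, x in zip(synapses, signals))
```

For example, two branches with stored weights 0.5 and 2.0 that each receive a unit signal yield a combined output of 2.5, which is the summation behavior in its simplest form.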

A small robot on the ISS will practice performing surgery in space

A surgical tool currently in clinical trials will slice fake flesh in space — providing groundbreaking new tech for space-based medical emergencies.