Geometry may come from navigation skills shared with animals, while human language allows those spatial abilities to become abstract mathematical reasoning.

Consider teaching a computer how to read by giving it billions of books. You don’t teach it grammar rules or logic; you simply ask it to play a game: “Look at these words, and guess what word comes next.” To win this game at a world-class level, the computer can’t just memorize phrases. It has to start figuring out how the world works. If it’s reading a mystery novel, it needs to deduce who the killer is to guess the final sentence. If it’s reading a math textbook, it has to understand addition to predict the answer to a problem.

This is the core idea explored in a recent scientific paper titled “Algorithmic Compression via Pretrained Neural Networks.” The researchers look under the hood of today’s Large Language Models (LLMs), such as the AI assistants we use every day, to explain a fascinating mystery: Why does a machine trained merely to predict the next word end up looking like it can think, reason, and solve complex problems?

Think about how a ZIP file works on your computer. If you have a massive text file filled with the word “apple” repeated a million times, a compression program won’t save all million words. It will compress it into a short rule: “Repeat ‘apple’ 1,000,000 times.” It turns a massive mountain of data into a tiny, elegant recipe.

(learning how to learn). Because the AI is fed a massive, diverse diet of information, it can’t just memorize everything. Instead, it is forced to find the underlying “recipes” or rules behind the data it sees. When you type a prompt into an AI, it doesn’t just look up an answer in a database. It looks at your text, infers the “generative algorithm” (the underlying pattern or logic of what you are asking), and uses that pattern to compress the problem and generate the correct response. In essence, it deduces the hidden rules of the game on the fly.
* Discover Complex Logic: When given a sequence of chess moves, the AI doesn’t just guess random moves; it actually reconstructs the abstract rules and evaluations of a chessboard in its digital “mind.”

While this framework helps explain why AI is getting so smart, it also opens up big new questions. We know these models are compressing data and finding rules, but we still don’t fully understand the absolute limits of this approach. How close can a practical AI get to that theoretical “perfect” intelligence? What happens when the AI runs out of human data to learn from?
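The ZIP-file analogy above can be tried directly. The following is a minimal sketch using Python's standard `zlib` module, which is a general-purpose compressor and not the method studied in the paper; the helper name `compressed_size` and the repetition count are illustrative assumptions. It compares how far a highly regular text shrinks against random bytes that contain no rule to discover.

```python
import os
import zlib


def compressed_size(data: bytes) -> int:
    """Return the number of bytes zlib needs to store `data` at maximum effort."""
    return len(zlib.compress(data, 9))


if __name__ == "__main__":
    # Highly regular data: one short "recipe" describes the whole file.
    repetitive = b"apple " * 100_000
    # Random data of the same length: there is no pattern to exploit.
    noise = os.urandom(len(repetitive))

    for label, data in (("repetitive", repetitive), ("random", noise)):
        ratio = compressed_size(data) / len(data)
        print(f"{label}: {len(data)} bytes, compressed to {ratio:.4%} of original")
```

The repetitive input collapses to a tiny fraction of its size, while the random input does not shrink at all. The article's claim is that a good next-word predictor is pushed toward the first situation: it does well only to the extent that it finds the regularities in its data.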

Vitányi was appointed professor of computer science at the University of Amsterdam, and researcher at the National Research Institute for Mathematics and Computer Science in the Netherlands (CWI, initially Mathematical Centre [MC]), where he is currently a CWI Fellow. He was guest professor at the University of Copenhagen in 1978; research associate at the Massachusetts Institute of Technology in 1985/1986; Gaikoku-Jin Kenkyuin (councilor professor) at INCOCSAT at the Tokyo Institute of Technology in 1998; visiting professor at Monash University in 1996, at Boston University in 2004, and at the National ICT of Australia (NICTA) at the University of New South Wales in 2004/2005; and visiting professor and adjunct professor of computer science at the University of Waterloo from 2005.

Universal nature of structure.

Ontic Structural Realism (OSR) holds that structure is ontologically fundamental, yet it lacks a precise metaphysical account of structure. Returning to the insight that originally motivated structural realism, I develop a new basis for OSR grounded in the metaphysical foundations of mathematics. This approach draws on the principles of ante rem structuralism and their formal axiomatizations to define Structure Theory (ST), the view that structures exist sui generis and constitute the subject matter of mathematics. ST compels OSR to confront its “collapse problem” of distinguishing physical from mathematical structure. I argue for embracing the collapse by adopting the Mathematical Universe Hypothesis (MUH), which identifies our physical universe as an ante rem structure.

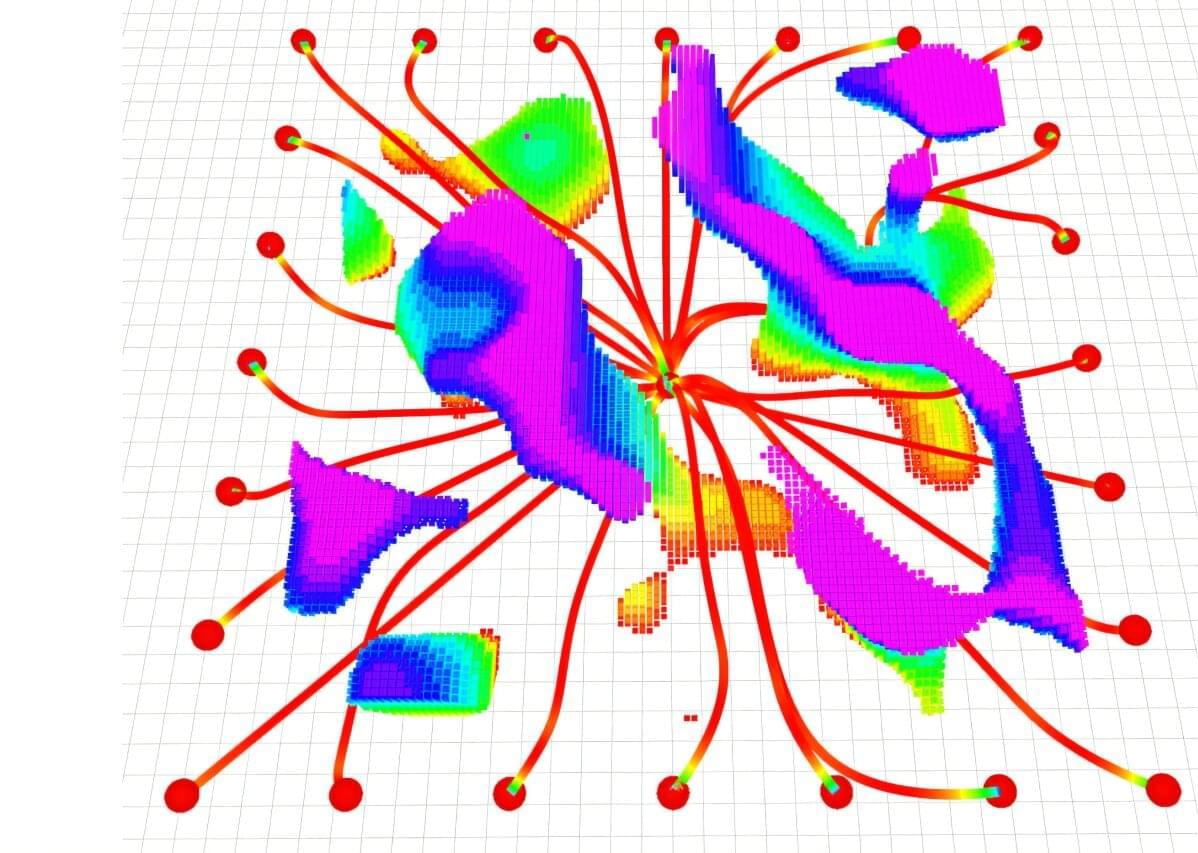

For nearly 80 years, mathematicians have struggled to solve a classic geometry puzzle first posed by Paul Erdős in 1946: the planar unit distance problem. The question posed by the legendary Hungarian mathematician was, on the surface, deceptively simple.

It asks: if you take a piece of paper and add some dots, how many pairs can be exactly the same distance apart? Erdős himself proposed that the maximum number grows only slightly faster than the number of dots. Although many mathematicians agreed with him, no one could find a way to mathematically prove it.
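To make the question concrete, here is a small brute-force sketch that counts how many pairs of dots sit at one chosen distance. The function names and the choice of a square grid are illustrative assumptions, not taken from any of the papers. Squared integer distances are used so the comparison is exact, and picking which squared distance to count is equivalent to rescaling the picture so that distance becomes the "unit".

```python
from itertools import combinations


def square_grid(n):
    """Return the n*n integer points (x, y) with 0 <= x, y < n."""
    return [(x, y) for x in range(n) for y in range(n)]


def count_pairs_at_squared_distance(points, d2):
    """Count unordered pairs of points whose squared distance equals d2."""
    return sum(
        1
        for (x1, y1), (x2, y2) in combinations(points, 2)
        if (x1 - x2) ** 2 + (y1 - y2) ** 2 == d2
    )


if __name__ == "__main__":
    grid = square_grid(5)  # 25 dots
    for d2 in (1, 2, 5):
        pairs = count_pairs_at_squared_distance(grid, d2)
        print(f"squared distance {d2}: {pairs} pairs")
```

On the 5-by-5 grid, treating the knight's-move distance (squared distance 5) as the unit gives more matching pairs than the side length does, because more integer offsets share that length. Grid constructions exploit this effect by choosing a distance that many offsets realize.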

Using a conventional computer and cutting-edge mathematical tools and code, physicists at the Center for Computational Quantum Physics (CCQ) at the Simons Foundation’s Flatiron Institute and collaborators at Boston University have cracked a daunting quantum physics problem previously claimed to be solvable only by quantum computers.

The technique is so groundbreaking in its efficiency that the researchers were even able to use a personal laptop to solve the problem.

By enabling scientists to squeeze extra problem-solving power from classical computers, the breakthrough methodology is opening new avenues for research on quantum dynamics and may be useful as a protocol for solving problems about finding the optimal solution amid an abundance of feasible ones.

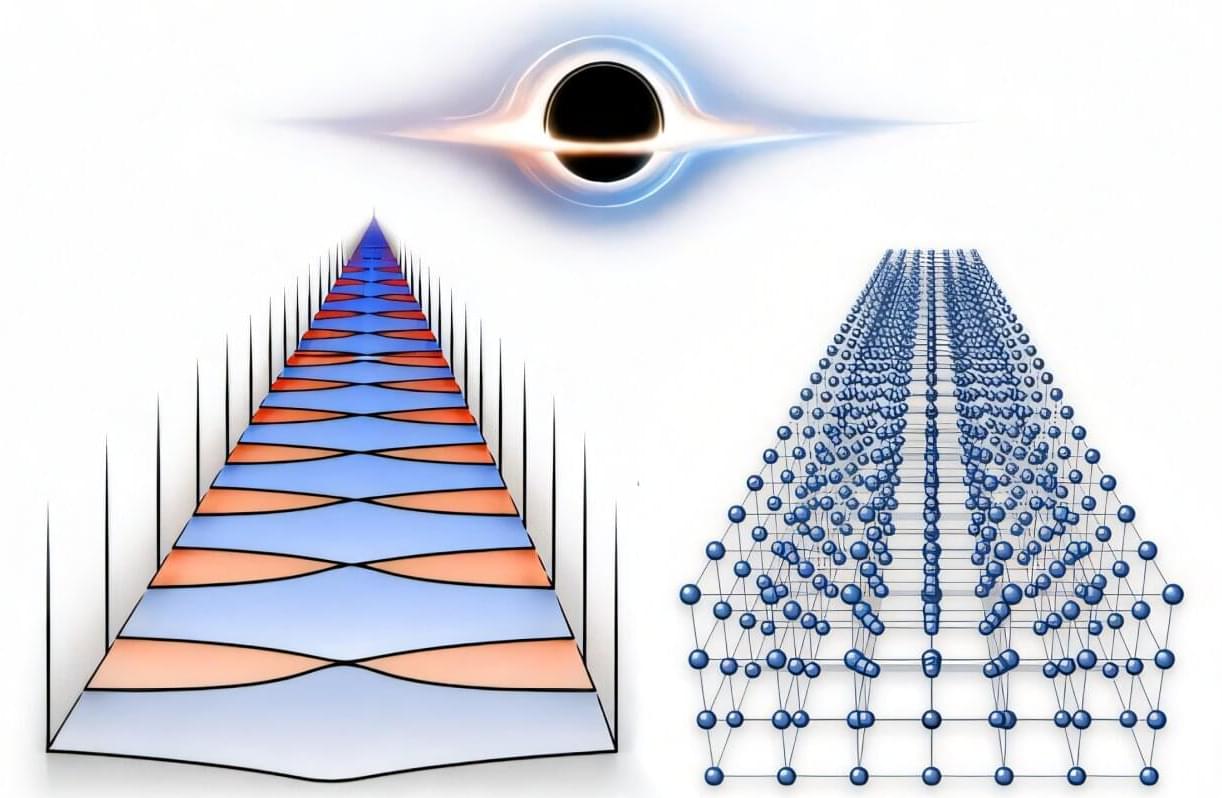

A team from Vienna and Frankfurt has found a formula describing a strange phenomenon: Space and time can form a kind of “crystal” that may turn into a black hole. The results are described in Physical Review Letters.

Alongside the famous gigantic black holes, physics also allows for microscopic versions. They emerge from so-called critical states, when spacetime organizes itself into a regular, crystal-like structure during a process known as critical collapse. A team from Goethe University Frankfurt and TU Wien has now succeeded, for the first time, in describing this phenomenon with an exact mathematical formula using an unusual mathematical trick.

Black holes usually form in spectacular events, such as the death of a massive star. But in theory, arbitrarily small black holes are also possible: tiny microscopic objects that can emerge from special critical states after the slightest addition of energy. Such states may have existed shortly after the Big Bang, when the universe was still a chaotic mixture of particles, potentially giving rise to so-called primordial black holes.

Today, we share a breakthrough on the unit distance problem. Since Erdős’s original work, the prevailing belief has been that the “square grid” constructions depicted further below were essentially optimal for maximizing the number of unit-distance pairs. An internal OpenAI model has disproved this longstanding conjecture, providing an infinite family of examples that yield a polynomial improvement. The proof has been checked by a group of external mathematicians. They have also written a companion paper explaining the argument and providing further background and context for the significance of the result.

The result is also notable for how it was found. The proof came from a new general-purpose reasoning model, rather than from a system trained specifically for mathematics, scaffolded to search through proof strategies, or targeted at the unit distance problem in particular. As part of a broader effort to test whether advanced models can contribute to frontier research, we evaluated it on a collection of Erdős problems. In this case, it produced a proof resolving the open problem.

This proof is an important milestone for the math and AI communities. It marks the first time that a prominent open problem, central to a subfield of mathematics, has been solved autonomously by AI. It also demonstrates the depth of reasoning these systems now support. Mathematics provides a particularly clear testbed for reasoning: the problems are precise, potential proofs can be checked, and a long argument only works if the reasoning holds together from beginning to end. The method by which the problem was solved is also notable. The proof brings unexpected, sophisticated ideas from algebraic number theory to bear on an elementary geometric question.

Lisa Feldman Barrett, Michael Levin and Adam Frank discuss whether science should abandon its materialist framework.

Could a different metaphysics help science to progress further?

With a free trial, you can watch the full debate NOW at https://iai.tv/video/science-beyond-t…

For centuries, we’ve assumed that science has banished the transcendent and established that reality is entirely physical. But critics argue there are signs that a rigorous materialism might be holding science back. Increasingly, “emergence” is used to account for everything from consciousness to spacetime – a convenient placeholder for what materialist science may be unable to explain. Physicists like Heisenberg and Hawking concluded that science gives us models of reality, rather than final descriptions of its true nature, while there are scientists working in everything from biology to computer science who suggest that dualism is a productive metaphysical framework for their research. Materialism may have enabled science to reach beyond the dogmas of religion, but there are now those who are restlessly probing the limits of materialism itself.

Does science need to assume a materialist account of the world or might this have fundamental limitations? Could a different metaphysics help science make progress on key questions, from the origin of life to the mysteries of quantum gravity? Or would abandoning materialism risk returning us to the myths of superstition and religion?

#science #materialism #metaphysics

Lisa Feldman Barrett is among the most cited scientists in the world for her research on the psychology and neuroscience of emotions. Adam Frank is an astrophysicist who explores the origins of stars, civilizations and consciousness, and is a leading figure in astrobiology and the search for alien life. Michael Levin is a synthetic biologist whose pioneering work in regenerative biology involves building biological robots to probe the nature of life, intelligence and evolution. Güneş Taylor hosts.
The Institute of Art and Ideas features videos and articles from cutting edge thinkers discussing the ideas that are shaping the world, from metaphysics to string theory, technology to democracy, aesthetics to genetics.

* 0:00 Intro
* 1:34 Science cannot reveal objective reality
* 5:28 History shows that materialism is one of many philosophies of science
* 8:59 There are some mathematical facts which are discovered, not chosen
* 12:14 Does materialism prevent mythical and superstitious views of reality?
* 14:56 There is no 3rd person view of the universe
* 18:05 Is science truly reproducible?

For debates and talks: https://iai.tv For articles: https://iai.tv/articles For courses: https://iai.tv/iai-academy/courses.


Ultimately, QIML proves that we don’t need a fully fault-tolerant quantum computer to see results. By using quantum processors to learn the complex “rules” of chaos, we can give classical computers the boost they need to make reliable, long-term predictions about the most turbulent environments in the natural world.

Modeling high-dimensional dynamical systems remains one of the most persistent challenges in computational science. Partial differential equations (PDEs) provide the mathematical backbone for describing a wide range of nonlinear, spatiotemporal processes across scientific and engineering domains (1–3). However, high-dimensional systems are notoriously sensitive to initial conditions and the floating-point numbers used to compute them (4–7), making it highly challenging to extract stable, predictive models from data. Modern machine learning (ML) techniques often struggle in this regime: While they may fit short-term trajectories, they fail to learn the invariant statistical properties that govern long-term system behavior. These challenges are compounded in high-dimensional settings, where data are highly nonlinear and contain complex multiscale spatiotemporal correlations.
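The contrast between unpredictable trajectories and stable long-run statistics can be seen in a toy system. The sketch below uses the one-dimensional logistic map with parameter 4 as a stand-in; this is an assumption made for illustration, not one of the high-dimensional PDE systems discussed here, and the function names are hypothetical. Two runs that start almost identically separate completely, yet both spend about the same share of time in each half of the interval.

```python
def logistic_trajectory(x0, steps, r=4.0):
    """Iterate x -> r * x * (1 - x) and return the list of visited states."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def max_separation(x0, eps, steps):
    """Largest gap between two trajectories whose starts differ by eps."""
    a = logistic_trajectory(x0, steps)
    b = logistic_trajectory(x0 + eps, steps)
    return max(abs(p - q) for p, q in zip(a, b))


def fraction_below(xs, threshold=0.5):
    """Share of visited states below threshold: a crude invariant statistic."""
    return sum(x < threshold for x in xs) / len(xs)


if __name__ == "__main__":
    x0, eps = 0.2, 1e-10
    print("max separation over 100 steps:", max_separation(x0, eps, 100))
    for start in (x0, x0 + eps):
        frac = fraction_below(logistic_trajectory(start, 20_000))
        print(f"start {start!r}: fraction of time below 0.5 = {frac:.3f}")
```

A model that matches the first quantity step by step is fighting sensitivity to initial conditions and rounding, whereas the second quantity is the kind of invariant property that remains learnable over long horizons.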

ML has seen transformative success in domains such as large language models (8, 9), computer vision (10, 11), and weather forecasting (12–15), and it is increasingly being adopted in scientific disciplines under the umbrella of scientific ML (16). In fluid mechanics, in particular, ML has been used to model complex flow phenomena, including wall modeling (17, 18), subgrid-scale turbulence (19, 20), and direct flow field generation (21, 22). Physics-informed neural networks (23, 24) attempt to inject domain knowledge into the learning process, yet even these models struggle with the long-term stability and generalization issues that high-dimensional dynamical systems demand. To address this, generative models such as generative adversarial networks (25) and operator-learning architectures such as DeepONet (26) and Fourier neural operators (FNO) (27) have been proposed. While neural operators offer discretization invariance and strong representational power for PDE-based systems, they still suffer from error accumulation and prediction divergence over long horizons, particularly in turbulent and other chaotic regimes (28, 29). Recent work, such as DySLIM (30), enhances stability by leveraging invariant statistical measures. However, these methods depend on estimating such measures from trajectory samples, which can be computationally intensive and inaccurate in all forms of chaotic systems, especially in high-dimensional cases. These limitations have prompted exploration into alternative computational paradigms. Quantum machine learning (QML) has emerged as a possible candidate due to its ability to represent and manipulate high-dimensional probability distributions in Hilbert space (31). 
Quantum circuits can exploit entanglement and interference to express rich, nonlocal statistical dependencies using fewer parameters than their classical counterparts, which makes them well suited for capturing invariant measures in high-dimensional dynamical systems, where long-range correlations and multimodal distributions frequently arise (32). QML and quantum-inspired ML have already demonstrated potential in fields such as quantum chemistry (33, 34), combinatorial optimization (35, 36), and generative modeling (37, 38). However, the field is constrained on two fronts: Fully quantum approaches are limited by noisy intermediate-scale quantum (NISQ) hardware noise and scalability (39), while quantum-inspired algorithms, being classical simulations, cannot natively leverage crucial quantum effects such as entanglement to efficiently represent the complex, nonlocal correlations found in such systems. These challenges limit the standalone utility of QML in scientific applications today. Instead, hybrid quantum-classical models provide a promising compromise, where quantum submodules work together with classical learning pipelines to improve expressivity, data efficiency, and physical fidelity. In quantum chemistry, this hybrid paradigm has proven feasible, notably through quantum mechanical/molecular mechanical coupling (40, 41), where classical force fields are augmented with quantum corrections. Within such frameworks, techniques such as quantum-selected configuration interaction (42) have been used to enhance accuracy while keeping the quantum resource requirements tractable. In the broader landscape of quantum computational fluid dynamics, progress has been made toward developing full quantum solvers for nonlinear PDEs. Recent works by Liu et al. (43) and Sanavio et al. (44, 45) have successfully applied Carleman linearization to the lattice Boltzmann equation, offering a promising pathway for simulating fluid flows at moderate Reynolds numbers.
These approaches, typically using algorithms such as Harrow-Hassidim-Lloyd (HHL) (46), promise exponential speedups but generally necessitate deep circuits and fault-tolerant hardware.

Quantum-enhanced machine learning (QEML) combines the representational richness of quantum models with the scalability of classical learning. By leveraging uniquely quantum properties such as superposition and entanglement, QEML can explore richer feature spaces and capture complex correlations that are challenging for purely classical models. Recent successes in quantum-enhanced drug discovery (37), where hybrid quantum-classical generative models have produced experimentally validated candidates rivaling state-of-the-art classical methods, demonstrate the practical potential of QEML even before full quantum advantage is achieved. Despite these strengths, practical barriers remain. QEML pipelines require repeated quantum-classical communication during training and rely on costly quantum data-embedding and measurement steps, which slow computation and limit accessibility across research institutions.

In the aftermath of a devastating earthquake, unpiloted aerial vehicles (UAVs) could fly through a collapsed building to map the scene, giving rescuers information they need to quickly reach survivors. But this remains an extremely challenging problem for an autonomous robot, which would need to swiftly adjust its trajectory to avoid sudden obstacles while staying on course.

Researchers from MIT and the University of Pennsylvania developed a new trajectory-planning system that tackles both challenges at once. Their technique enables a UAV to react to obstacles in milliseconds while staying on a smooth flight path that minimizes travel time.

Their system uses a new mathematical formulation that ensures the robot travels safely to its destination along a feasible path, and that is less computationally intensive than other techniques. In this way, it generates smoother trajectories faster than state-of-the-art methods.