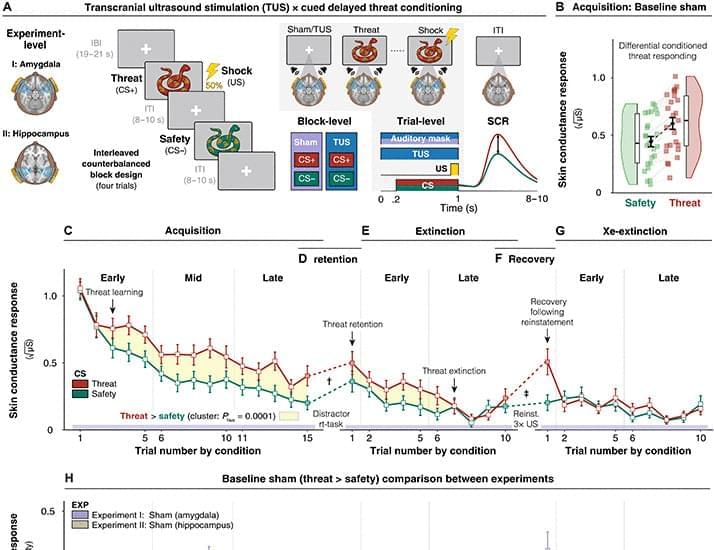

Ultrasonic neuromodulation of the human amygdala provides causal evidence for its role in forming persistent threat memories.

👉 Special Offer for the first PhD-worthy AI: use discount Code SABINE20 at http://jenni.ai/?utm_source=youtube&u…

The Fermi Paradox is the question of why we haven’t been contacted by any extraterrestrial species. In a recent paper, astrophysicists analyzed the paradox by instead examining how civilizations with the ability to send signals through space might develop. Unfortunately for us, their findings are quite bleak – but let’s take a look anyway.

Paper: https://arxiv.org/abs/2602.

👕T-shirts, mugs, posters and more: ➜ https://sabines-store.dashery.com/

💌 Support me on Donorbox ➜ https://donorbox.org/swtg

👉 Transcript with links to references on Patreon ➜ / sabine.

📝 Transcripts and written news on Substack ➜ https://sciencewtg.substack.com/

📩 Free weekly science newsletter ➜ https://sabinehossenfelder.com/newsle…

👂 Audio only podcast ➜ https://open.spotify.com/show/0MkNfXl…

🔗 Join this channel to get access to perks ➜ / @sabinehossenfelder

📚 Buy my book ➜ https://amzn.to/3HSAWJW

#science #sciencenews #aliens #astrophysics.

This video discusses the Fermi Paradox, questioning the absence of extraterrestrial life despite the vastness of the cosmos. The Milky Way has had billions of years to produce civilizations, so where is everybody? A new paper’s analysis suggests a concerning conclusion regarding this silence, prompting us to consider what the lack of alien life tells us about our universe. 🔭

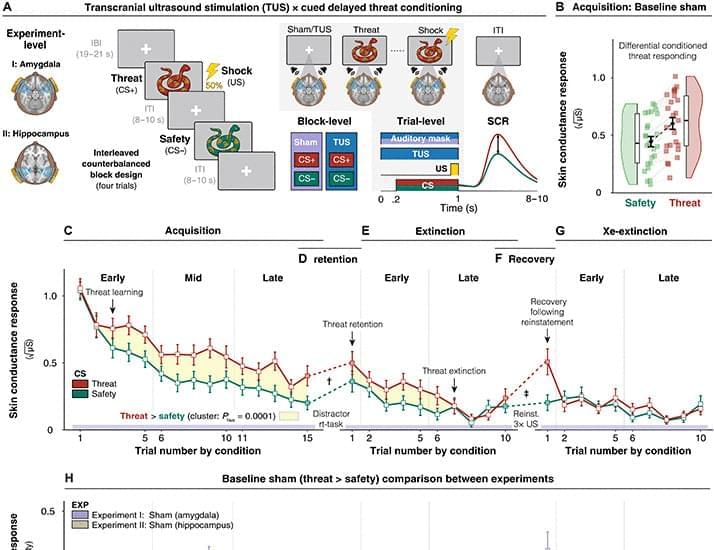

Every thought, memory, and feeling we experience depends on trillions of tiny connection points in the brain called synapses. These are the junctions where one neuron passes signals to another, forming the vast communication network known as the connectome—the brain’s wiring diagram. Although scientists have developed powerful tools to increase or decrease neural activity, directly redesigning the brain’s physical wiring has remained far more difficult.

A research team has now developed a molecular tool that makes such structural editing possible. The new platform, called SynTrogo (Synthetic Trogocytosis), enables researchers to induce astrocytes to selectively remodel synaptic connections in a targeted brain circuit.

The system works like a molecular lock-and-key mechanism. Neurons in the target circuit are engineered to display a molecular “tag” on their surface (a lock), while nearby astrocytes are engineered with a matching binding partner (a key). When the two cells come into contact, the astrocyte is induced to “nibble” part of the neuronal membrane and nearby synaptic material through a trogocytosis-like process—a form of partial cellular uptake seen in several biological systems. By harnessing this process synthetically, the researchers created a way to selectively reduce synaptic connectivity in a defined neural circuit.

The team then asked whether these cellular changes translated into behavioral effects. In contextual fear-conditioning experiments, mice with SynTrogo-modified hippocampal circuits showed stronger memory than control animals. They displayed enhanced recall both two days after learning and 23 days later, indicating improvements in both recent and remote memory. Importantly, these mice also remained capable of extinction learning—the process by which previously learned fear responses are reduced when they are no longer appropriate—suggesting that SynTrogo strengthened memory without sacrificing cognitive flexibility.

Further analysis suggested that SynTrogo may place synapses into a more plastic, learning-ready state. Before learning, AMPA receptor-mediated synaptic responses were reduced, but after fear conditioning they recovered to control-like levels. This implies that the remodeled circuit may be particularly poised for experience-dependent strengthening when new learning occurs.

We are already gene editing humans. You just haven’t noticed.

George Church, Harvard geneticist and Human Genome Project pioneer, explains why CRISPR wasn’t the real breakthrough, how multiplex gene editing unlocked organ transplants and de-extinction, and why aging will likely require rewriting many genes at once.

Hosted by Mgoes → https://twitter.com/m_goes_distance

Brought to you by SuperHuman Fund → https://superhuman.fund/

0:00 — Gene Editing Mammals → Humans

8:36 — Germline vs Somatic

14:56 — Modified Humans Are Already Here

18:50 — Enhancing Healthy Humans

25:00 — Aging Therapies vs Cognitive Enhancement

30:20 — Embryo Selection

38:10 — Is US Losing To UAE?

42:33 — Biotech Failures

49:31 — Next Dire Wolf Moment

54:21 — AI x Science

1:02:07 — Synthesizing Entire Genomes

The Accelerate Bio Podcast explores the future of humanity in the age of Artificial Intelligence. Subscribe for deep-dive conversations with founders, scientists, and investors shaping AI, biotechnology, and human progress.

This episode discusses George Church, gene editing, CRISPR, human enhancement, longevity, aging, embryo selection, synthetic biology, multiplex editing, AI biotech.

This episode was filmed at the 2026 Abundance360 Summit.

This interview explores the groundbreaking work of Colossal in synthetic biology, de-extinction, and AI integration. Colossal CEO Ben Lamm explains how the company is revolutionizing biodiversity preservation, tackling plastic pollution, and creating living products with immense potential.

Get access to metatrends 10+ years before anyone else — https://qr.diamandis.com/metatrends.

Ben Lamm is Co-founder and CEO of Colossal Biosciences

Peter H. Diamandis, MD, is the Founder of XPRIZE, Singularity University, ZeroG, and A360.

Across Earth, every night, thousands of automated stargazers are waiting to take pictures of shooting stars. I am one of the scientists who study these meteors.

Most movies and news alerts focus on large asteroids that could destroy Earth. And your phone notifies you every few months that an object nine washing machines wide is going to just narrowly skim past. However, the small dust and rubble that enter our atmosphere daily tell an equally interesting story.

My planetary science colleagues and I use camera observations of the night sky to better understand dust, car-sized asteroids and debris from comets in our solar system.

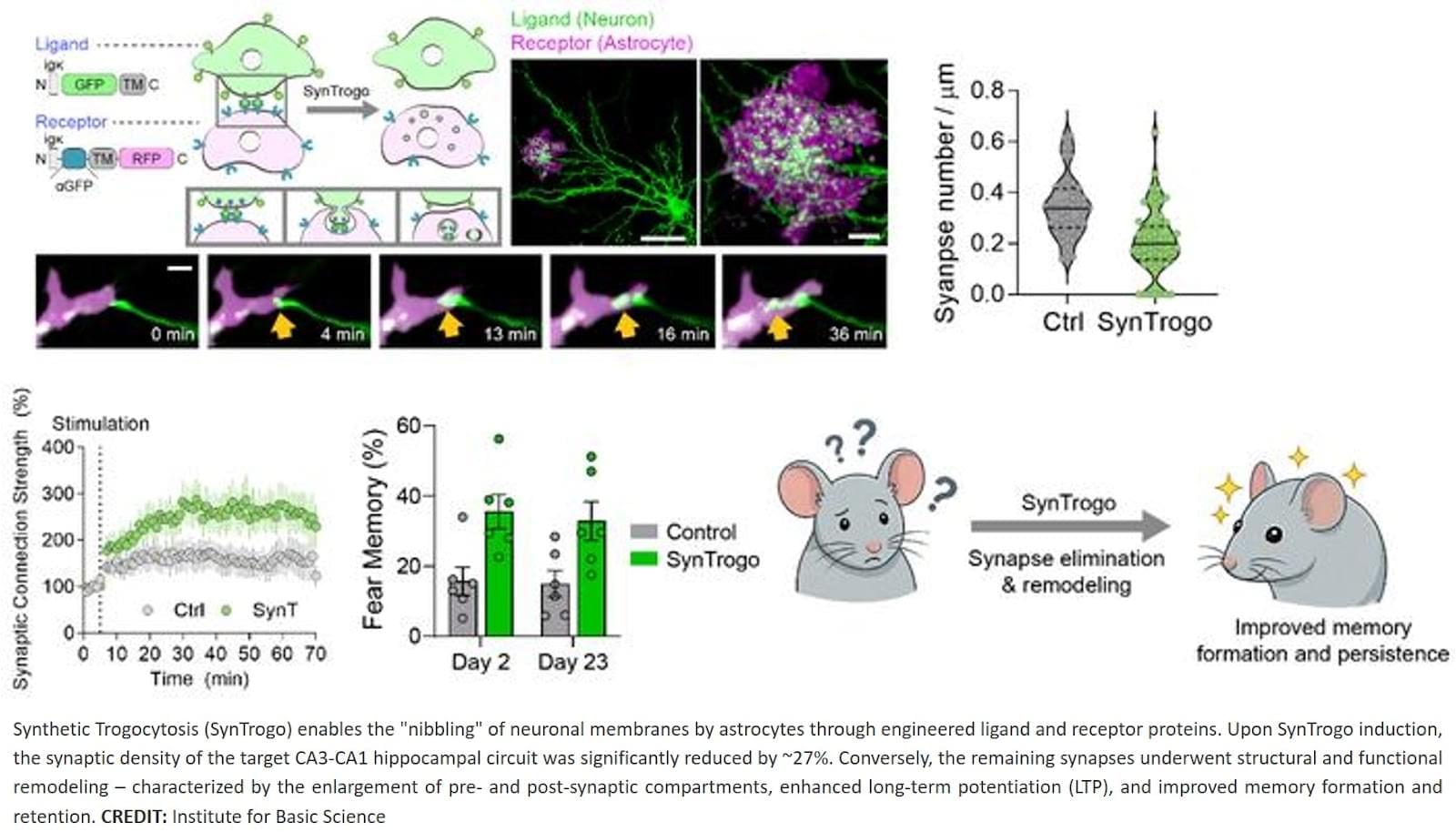

Physicists in China have simulated the effect of “false vacuum decay”: a phenomenon believed to play out constantly in the seemingly empty expanses of space, and which one theory even suggests could bring an abrupt end to the entire universe. In a paper published in Physical Review Letters, Yu-Xin Chao and colleagues at Tsinghua University, Beijing, mimicked the effect using a simple tabletop experiment.

For now, quantum field theory is our most accurate framework for fundamental physics below the scale at which gravity becomes important. It predicts that there is no such thing as a perfect vacuum: while a given space may appear entirely empty, the theory suggests that it is actually just the lowest-energy state of a continuous quantum field.

Since a quantum field can possess multiple local energy minima, a seemingly stable local ground state may not be the most stable state possible for the field as a whole. It is simply separated from a lower-energy, more stable state by an energy barrier, much as a valley may be separated from a deeper valley by a high mountain ridge.
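
As a rough illustration of the valley picture, consider a standard textbook toy model: a single field $\phi$ in a slightly tilted double-well potential. This is not the setup used in the Tsinghua experiment, and the symbols $\lambda$, $v$ and $\epsilon$ are assumed here purely for illustration:

$$
V(\phi) = \frac{\lambda}{4}\left(\phi^2 - v^2\right)^2 + \epsilon\,\phi, \qquad \lambda > 0, \quad 0 < \epsilon \ll \lambda v^3 .
$$

For small $\epsilon$ the two minima sit near $\phi \approx \pm v$, with energies of approximately $\pm\,\epsilon v$. The minimum near $\phi \approx +v$ is the higher-energy "false vacuum", the one near $\phi \approx -v$ is the lower-energy "true vacuum", and the barrier between them near $\phi \approx 0$ has a height of roughly $\lambda v^4/4$.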

Do you believe alien life could be completely unlike anything we’ve ever imagined? In this Science Documentary, we explore forms of life that may not need light, oxygen, or even a recognizable body—glowing through chemistry, drifting like gel in endless darkness, or existing as silent, stone-like structures. This Science Documentary follows the latest discoveries as telescopes probe distant worlds for signs of life. And closer to home, beneath thick ice, hidden oceans may already hold the first alien organisms humanity could reach. Join this Science Documentary as we challenge everything we think life should be.

1:04 The Nearest Life – Europa

4:30 Ocean Worlds – Life Without Light

8:30 Tidally Locked Worlds

12:41 Life in the Atmosphere – Creatures That Never Touch the Ground

15:23 Extreme Gravity – When the Shape of Life Is Rewritten by an Invisible Force

19:11 Non-Carbon Life – When Biology Moves Beyond Our Definition

23:04 The Fermi Paradox – If They Are Everywhere… Why Do We See No One?

26:37 Conclusion.

Welcome to WUFO, your space documentary channel dedicated to both education and entertainment.

WUFO explores the outer reaches of space, the craziness of astrophysics, the possibilities of sci-fi, and anything else you can think of beyond Planet Earth.

Each space documentary video is crafted to inspire curiosity, bring scientific knowledge to life, and make learning about space exciting and enjoyable.

Whether you’re passionate about astronomy, planetary science, or simply love exploring the cosmos, WUFO channel offers engaging journeys that expand your mind and spark your imagination.

Watch more science videos here: • Space Documentary

Moon Documentary: • Moon Documentary — WUFO

Make sure to subscribe and turn on notifications so you don’t miss out on new WUFO space documentaries!

🚨 THE UNIVERSE NEVER FORGETS. NOT A SINGLE MOMENT. You burned a book. The words are gone. The pages are ash. But physics says every letter still exists — scattered across trillions of particles, encoded in the quantum state of reality. And it’s not just books. Every breath you’ve ever taken. Every word you’ve ever spoken. Every person you’ve ever lost. The information is still here. Right now. Permanently.

🔴 WHAT YOU’LL DISCOVER:

🔴 Why burning something doesn’t destroy its information.

🔴 How Stephen Hawking lost the biggest bet in physics history.

🔴 The black hole war that nearly broke quantum mechanics.

🔴 Why spacetime itself is made of information.

🔴 What this means about death — and why nothing truly disappears.

⚠️ WARNING: After this video, you will never look at destruction the same way again.

Like and subscribe for more reality-breaking physics.

physics, quantum mechanics, information paradox, black holes, Hawking radiation, holographic principle, entropy, universe, science, reality, quantum information, spacetime, Leonard Susskind, Stephen Hawking, ER EPR.