In the vicissitudes of life, our recent and living generations moved from the hard times of a hundred years ago to the exponential good times of today. Now a few hundred key pioneers have positioned the world in front of the opportunities of Transhumanism and its main tenet, indefinite life extension. Will we unite the world on these issues and capitalize or waste it and let the weeds reclaim our “wheel”, the magnum opus of our generations? I challenge all would-be leaders and followers to honor our ancestors’ long tradition of pioneering the next stages of our future. Everything about you was crafted and honed for this and there is no other time. Find the blazers of our emerging values and paths, your philosophers of the future, out there at the forefronts on this epic new transhuman voyage of freedoms and discoveries and follow them. All leaders who haven’t already, I implore you to fully embrace your roles, triple down and raise your flags even higher. Friedrich Nietzsche wrote a book preluding this philosophy of the future, which serves as the structure for this paper and is quoted here throughout.

“[Conditioning to hard times] is thus established, unaffected by the vicissitudes of generations; the constant struggle with uniform unfavourable conditions is, as already remarked, the cause of a type becoming stable and hard. Finally, however, a happy state of things results, the enormous tension is relaxed; there are perhaps no more enemies among the neighbouring peoples, and the means of life, even of the enjoyment of life, are present in superabundance. With one stroke the bond and constraint of the old discipline severs: it is no longer regarded as necessary, as a condition of existence—if it would continue, it can only do so as a form of luxury, as an archaizing taste. Variations, whether they be deviations (into the higher, finer, and rarer), or deteriorations and monstrosities, appear suddenly on the scene in the greatest exuberance and splendour; the individual dares to be individual and detach himself. At this turning-point of history there manifest themselves, side by side, and often mixed and entangled together, a magnificent, manifold, virgin-forest-like up-growth and up-striving, a kind of tropical tempo in the rivalry of growth, and an extraordinary decay and self-destruction, owing to the savagely opposing and seemingly exploding aptitudes, which strive with one another ‘for sun and light,’ and can no longer assign any limit, restraint, or forbearance for themselves by means of the hitherto existing morality. It was this morality itself which piled up the strength so enormously, which bent the bow in so threatening a manner:—it is now ‘out of date,’ it is getting ‘out of date.’ ” – Friedrich Nietzsche, Beyond Good and Evil: Prelude to a Philosophy of the Future

Our elders came from the Great Depression and world war. Then they had to watch what they called “morals”, which were actually just coping mechanisms particular to their vicissitude of time, as Nietzsche gets at in various places, become increasingly disregarded. That happened faster than ever because, little did they know, the curve of exponential advancement in fields across the board was upon them. The variations of excellence and monstrosities proliferated as at no other time and were supercharged for an abundant harvest by the buds of enlightenment and technology that had been poking their heads out of the fertile intellectual fields of civilization during the smatterings of good times people were able to come upon throughout the century. A lot of it was stored as compounding action potential. It went off like rifles in the 50s and 60s, with so much force that the bullets are still flying today, and the shots of individual aptitude have been firing ever since. As he is saying, it’s a jungle of individual morals competing in the survival of the fittest, so you must find ways, of the kind that hard times naturally make, to get all these independent construction workers of the best ideas behind the same projects in order to tap that energy for the big stages and human potentials.

This is our window in time here, as I often say, to get projects like life extension, transhumanism, space exploration, and some other things done. The people of the past didn’t have this opportunity and the chance here isn’t available forever because death will close us off from it or bad times will set back in. A great gate in Plato’s cave has opened, the eternal guard lions of death have left their posts and we don’t know how long until they come back or the gate closes. It is devastating watching those who have been hypnotized by the cave, by the death trance, sitting there with a wide-open door and the clock ticking down. The climb must be made, now is the time, there is no other. Team up and follow the leaders on these new emerging circumstances and moral imperatives or everyone will die as the marvels of space and boundless technology tumble from our hands. We rouse them to action slowly but surely, though all as one, more gets done.

It is not difficult for Transhumanists or the masses to mistake increased privileges, like those so many in the industrialized world have enjoyed these past decades, for a chance to secure leisure, pride building, keeping up with the Joneses and so forth. When people start to make good money, do they generally harness all the opportunities they seethed about missing out on when they were poor: the recording studio, the seminars, the investments in life extension that I have heard countless people say they would be back to make when their worldly careers were secured? Many times, we just eat more expensive food, wear more expensive clothes, drive a better car and take more extravagant vacations, wasting the capital gains that were there or dreamed about.

Do hard times need to come back again to allow us to see how incredible the opportunities of this generation and the next “were”, so we can spend our time mourning instead of capitalizing? Or as is happening at present, do we need to let the existential voids gut the bulk of an increasing number of the masses around us until the despair is so great that it forces us back into the undistinguished moral frameworks of the intellectually deflated and delicate, those abasements of our character that grow among the fields of new excellence like weeds?

You see it emerging everywhere. Lots of people want it to be a trend not to talk about noble ambitions because it infringes on people’s sacred opinion bubbles, as though open discourse isn’t integral to the human condition and as if those opinion bubbles weren’t arrived at by forms of discourse themselves. Nihilisms everywhere try not only to discourage people from leaving the void but to get them to take up residence in it and work to enrich themselves with absurdity. New kinds of spirituality insist upon random arbitrariness and make it a trend to taboo all dissent. Some in science work to turn what is a mode of investigation into a mechanical way of life. Religion looms there, always ready to take advantage of the lost and reclaim its full-time hymn hummers. Television turns gossip and trivialities into an art and trains people in it. Since the world doesn’t have enough leadership yet, or rather, doesn’t recognize enough of it yet, most of the rest of television is reserved for pounding the uninspired aimlessness of general politics into people’s heads so a few can covertly jockey for power, just to waste that power on screaming into the void from atop piles of gold.

As Nietzsche refers to when talking about politics, and it applies in some ways to the rest of these competing frameworks for wasting time,

“Supposing a statesman were to bring his people into the position of being obliged henceforth to practise ‘high politics,’ for which they were by nature badly endowed and prepared, so that they would have to sacrifice their old and reliable virtues, out of love to a new and doubtful mediocrity;—supposing a statesman were to condemn his people generally to ‘practise politics,’ when they have hitherto had something better to do and think about, and when in the depths of their souls they have been unable to free themselves from a prudent loathing of the restlessness, emptiness, and noisy wranglings of the essentially politics-practising nations;—supposing such a statesman were to stimulate the slumbering passions and avidities of his people, were to make a stigma out of their former diffidence and delight in aloofness, an offence out of their exoticism and hidden permanency, were to depreciate their most radical proclivities, subvert their consciences, make their minds narrow, and their tastes ‘national’—what! a statesman who should do all this, which his people would have to do penance for throughout their whole future, if they had a future, such a statesman would be great, would he?”

The brevity of the portal of opportunity itself must become the crisis. That, along with the worst crisis of all that has always been here ready to propel us to ascending, unifying action if the masses hadn’t tucked tail and run to hide among rationalizations and excuses, trying vainly to escape it: the 100-year lifespan. More of us must lead, and follow the lead, on the issue of the enormous opportunity cost of death and the horrific tragedy of it. People are rightly held to account for excusing death away, but the blame is also ours for not keeping the injustices of death pronounced so that the excuse makers don’t have such a cushy and comfortable time construing them. When pro-death culture is rebutted, the messages start soaking in for everyone who hears them, and the people who are still forming their worldviews then have the chance to take in substantive versions of all sides of the story. Many people take a side that’s not conducive to life extension simply by default.

Leaders are needed to start the processions of masses on their courses of history, but there are leaders. So what is needed is people to start following them. As they say in movements, the first follower is the first leader, because they inspire the next 2 to follow, which inspires the next 4 to follow and so forth. What is lacking is enough people courageous enough to set the example and become the first followers.

These are my leaders and they should be yours too: Aubrey de Grey, Michael West, Dave Kekich, Bill Faloon, Kevin Perrott, Joao Pedro de Magalhaes, Natasha Vita-More, Max More, Zoltan Istvan, Keith Comito, Paul Sandford Mcglothin, Ben Goertzel, Bill Andrews, Dave Gobel, Dave Pizer, Michael Rose, Cynthia Kenyon, Gennady Stolyarov, Nikola Danaylov, Brian Kennedy, David Wood, Hank Pellissier, Eric Klien, David Kelley, Ilia Stambler, Roen Horn, Maria Konovalenko, Mikhail Batin, Michael Greve, Steve Hill, Elena Milova, James Hughes, Reason, Valerija Pride, Peter Rothman, Sven Bulterijs, Adam Ford, Nick Bostrom, Ray Kurzweil and at least a hundred others. Every small action taker is a priceless leader as well and every follower leads by example.

We need the media to start portraying our leaders until they can be recognized as such from a distance, when they have large enough crowds of followers clamoring around them. Media: make the people named here regular pundits and guests on the major news networks around the world. Do that and the people will follow; they will create the demands that get these jobs done. Aubrey will go to the head of the NIA and Kennedy to the head of the NIH, Faloon will go to the head of the FDA, Comito will become the Executive Director of CNN, Reason will have a top-rated show on ABC, Danaylov on CNN and Al Jazeera, and Wood on the BBC, Magalhaes will become the Director-General of the World Health Organization, and Kurzweil will become President. A bunch of them will become unofficial advisors and golf buddies of billionaires. They will fill out more of the roles in pop culture, from movies and songs to celebrity personalities and cultural references. Many will become Governors and join Congress, become Deans and Chancellors, and the world will be ripe for indefinite life extension and all the rest that follows from it. All of us transhumanists must continue manually building these followings until the media gets a better sight of the growing stories that are leading up to things like those and catches up.

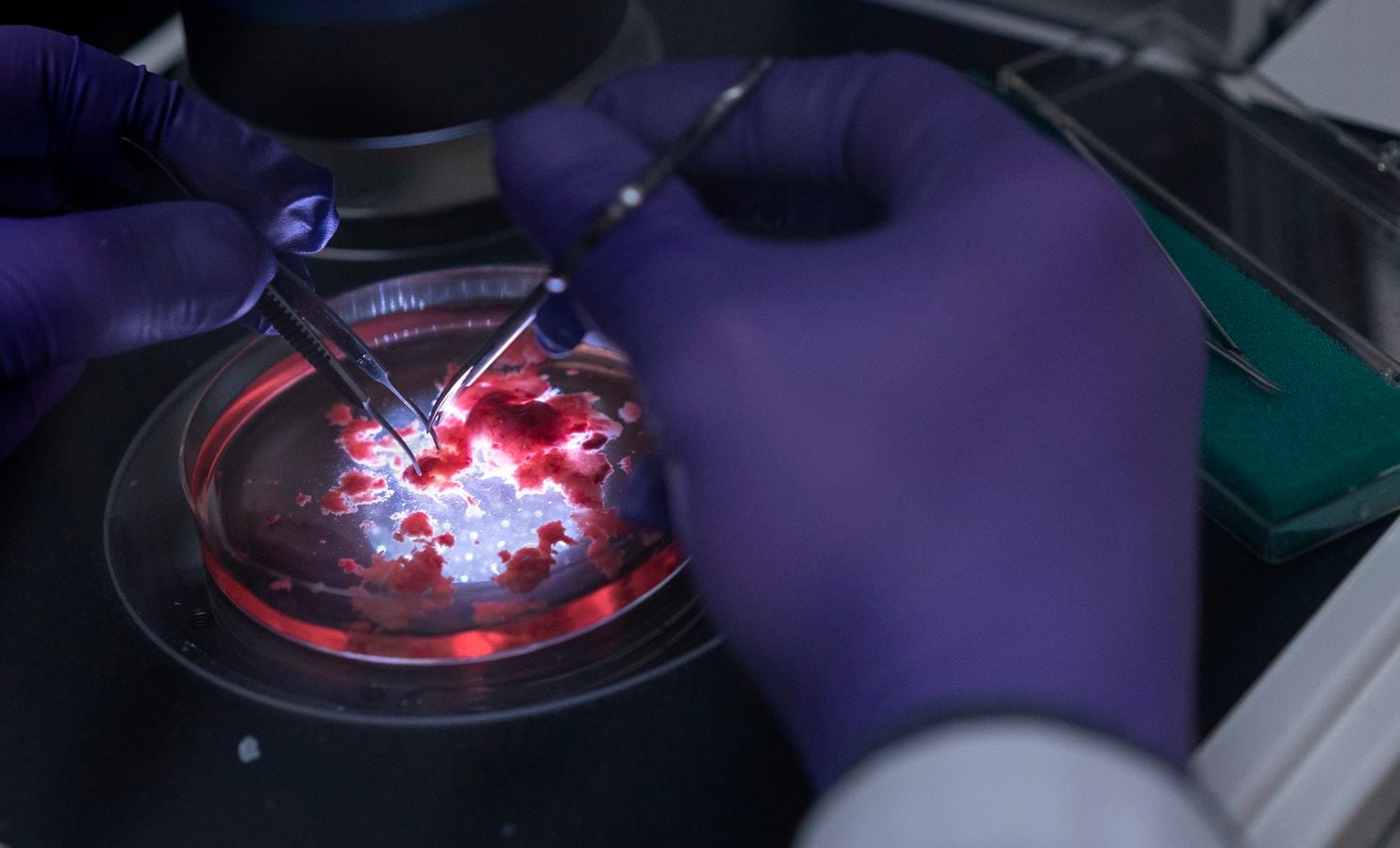

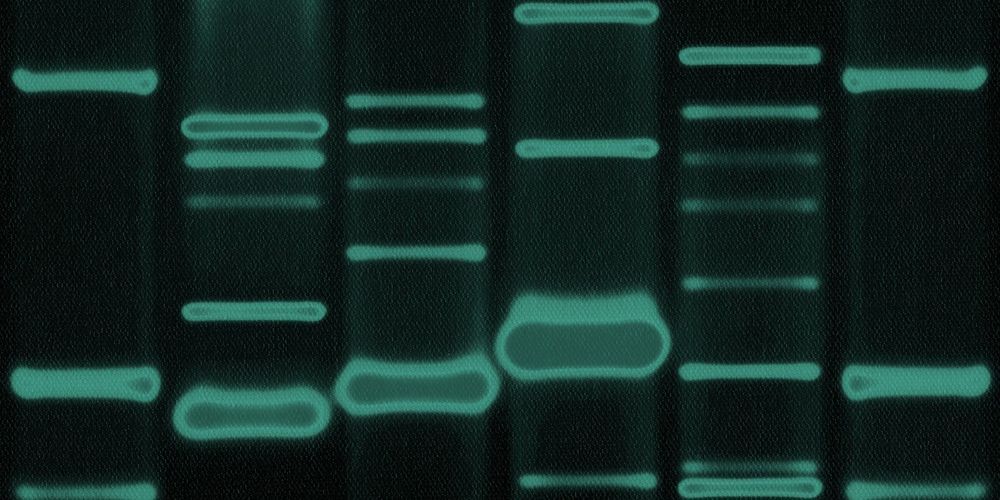

Humanity engineers cells, does more of it and gets better at it every year. With enough resources and focus, we will become master mechanics and precision engineers of the biological clock. Let’s ALL act like we aren’t suicidal and do these things that it takes to get the bustling world industry that supports this built.

In those good times where people’s individual aptitudes increasingly show, Nietzsche says that then,

“Danger is again present, the mother of morality, great danger; this time shifted into the individual, into the neighbour and friend, into the street, into their own child, into their own heart, into all the most personal and secret recesses of their desires and volitions. What will the moral philosophers who appear at this time have to preach? They discover, these sharp onlookers and loafers, that the end is quickly approaching, that everything around them decays and produces decay, that nothing will endure until the day after tomorrow, except one species of man, the incurably mediocre. The mediocre alone have a prospect of continuing and propagating themselves—they will be the men of the future, the sole survivors; ‘be like them! become mediocre!’ is now the only morality which has still a significance, which still obtains a hearing.—But it is difficult to preach this morality of mediocrity! it can never avow what it is and what it desires! it has to talk of moderation and dignity and duty and brotherly love—it will have difficulty in concealing its irony!”

Nietzsche talks at length about the clever moralists of mediocrity weaving webs of stagnancy. These leaders of mediocrity, the loafers and Chicken Littles, are out there drawing crowds right now. Are you going to let them develop large followings that assimilate the masses to the standpatisms, aimless conventionalities and the evolutionarily flightless bird-like destinations of life that absorb so many now, or are Life Extension and the overall frontier-expanding trajectories of Transhumanism the competing evolutionary principles that will win? Will we unite and build the vast armies of pioneers who blaze open the frontiers of Sapiens potential? As Natasha Vita-More writes, “We need a worldwide league of activists and experts to help spread positive news, reliable information, and a well-thought-out socio-political stance.” More heads of life extension organizations and initiatives: take up administration positions at the movement for indefinite life extension page, facebook.com/movementforindefinitelifeextension. There are many now and we need you all. Post important things related to life extension there and refer people back to it when the opportunities arise. By getting people to join there, we have them all join the whole, creating solidarity and a larger show of force, which is crucial in grabbing large-scale attention. We involve increasing percentages of these grassroots supporters from there.

A quarter of a billion people of the world can make these transhuman goals happen and a quarter billion are there to follow if we want them to. Our stance is established in themes and concepts that are written about in papers and books around the cause. I’m writing a key book on the fundamental imperative of indefinite life extension that I have condensed and worked out over the years, and I suggest that all who join as administrators of the movement for indefinite life extension contribute to a series of books to be called The Movement for Indefinite Life Extension. The admins will meet to develop a list of all foundational stances that we will seek to have covered and make the final decisions on what goes into the series for philosophy, politics, sociology, ethics, history, psychology and so forth. We will also develop one coordinated mass movement for indefinite life extension event to involve the participation of no fewer than 1,000 people, designed to drive world spotlight to the cause and drive up these “likes”/de facto petition signatures, with no time frame yet set.

When other people try to get transhumanism’s would-be followers to play Duck Duck Goose instead of cosmic chess, they are coming for your future, destiny, place in the history books, all of our lives, your holodeck, our tickets to vacations on other planets and chance to live in a world of superabundance. Things like 3D printing and cheap energy sources can make the entire population of the world richer than the richest Forbes List billionaires of today, just as the middle class among us today is richer than the richest Kings and Queens of a thousand years ago. Your golden geese circle daffy ducks while the windows close. We can get more people to show up for this than they can for that. Beat them, play to win, you are the alpha thinkers, step into the role, claim your destiny. Who among us is seriously going to follow through with letting these people stomp out the purpose of all our lives? Those are our followers, but by leaving them to their own devices, and idle to what’s important, we foster the very conditions that slow us down.

The unique position of this generation, the last and the next is in front of this window of the opportunities of all time and space. Technology can develop far enough and get enough done in time for us. Self-actualization and beyond are ours if we’ll only expedite these goals, if we’ll speed up the process of generating awareness and participation. We know where we’re going, why, and how to get there. Lead by leading or lead by setting the example and following, it’s the same difference, it all gets us there.

“They determine first the Whither and the Why of mankind, and thereby set aside the previous labour of all philosophical workers, and all subjugators of the past—they grasp at the future with a creative hand, and whatever is and was, becomes for them thereby a means, an instrument, and a hammer. Their “knowing” is CREATING, their creating is a law-giving, their will to truth is—WILL TO POWER.—Are there at present such philosophers? Have there ever been such philosophers? MUST there not be such philosophers some day?”

Let’s keep the mediocre at bay by engaging our ethic of the tradition of progress. Stand behind the United Nations Universal Declaration of Human Rights http://www.un.org/en/universal-declaration-human-rights/, the Transhumanist Declaration https://humanityplus.org/philosophy/transhumanist-declaration/, the Transhumanist Bill of Rights https://transhumanist-party.org/tbr-2/, and the Technoprogressive Declaration https://transvision-conference.org/tpdec2017/ to make sure there is enough support to signal to the world that this is where we all need to be. Otherwise, the scales of supernaturalism, nihilism, consumerism or some other thing could tip us too far into the abysses of lost potential.

The United Nations Universal Declaration of Human Rights says, for example:

Article 27: “Everyone has the right freely to participate in the cultural life of the community, to enjoy the arts and to share in scientific advancement and its benefits.”

Article 28: “Everyone is entitled to a social and international order in which the rights and freedoms set forth in this Declaration can be fully realized.”

In other words, the world’s goals are no longer to figure out ways to free ourselves from the worst of the struggles, tedium and grunt labor. Now our goals are to make sure everyone does what it takes to implement the achievements that have unlocked those limitations and to enable the right of everyone to stand before the fertile fields of our aptitude.

The spirit of that declaration, and the centuries of progress and evolving mindset that built up to it, have helped establish a foundation for transhumanism to gain its footing and flourish, and those are two of its articles that sum up the angle that the Transhumanist Declaration, Transhumanist Bill of Rights, and Technoprogressive Declaration expand on. As they outline, the time to focus on adding to our overall condition, or as we call it, “Humanity+”, is here.

Another line in the UN declaration suggests that these conditions and our morality naturally prescribe this transhuman expansion and direction.

Article 29: “Everyone has duties to the community in which alone the free and full development of his personality is possible.”

Developing our species is the overarching reason why you exist, the nature of your reality. We must know what is going on in order to know what we need and want, and that cannot be known until we develop enough to have the chance to do so. New technologies and extended lengths of lifespan are imperative.

The Transhumanist Declaration, the Transhumanist Bill of Rights and the Technoprogressive Declaration declare these kinds of fundamentals of our condition in various ways.

In the Transhumanist Declaration:

“Reduction of existential risks, and development of means for the preservation of life and health, the alleviation of grave suffering, and the improvement of human foresight and wisdom should be pursued as urgent priorities, and heavily funded.”

In the Transhumanist Bill of Rights:

Article IV. “Sentient entities are entitled to universal rights of ending involuntary suffering, making personhood improvements, and achieving an indefinite lifespan via science and technology.”

Article VIII. “Sentient entities are entitled to the freedom to conduct research, experiment, and explore life, science, technology, medicine, and extraterrestrial realms to overcome biological limitations of humanity.”

In the Technoprogressive Declaration:

“Our vision is a sustainable abundance of: clean energy, healthy food, shelter, affordable healthcare, all-round intelligence, mental well-being, and time for creativity, enabled by application of converging technologies, with no one left behind.”

Did the people of 1,000 years ago have opportunities like these? Most of the opportunities they go over weren’t even conceived of yet, not even 100 years ago. Only some of the seeds were then growing deep in the forests of daily awareness. Now the fruits are falling off the trees in groves as far as the eye can see. There has never been a time like this before. Anyone who is considering getting in on this movement, do it now before it’s too late. Just message me and I will personally pitch you indisputable reasoning why you ought to join. Stay tuned to the movement for indefinite life extension post feed for a steady stream of the news, reasoning, philosophy, sociological insights, declarations, opportunities for involvement, updates and everything else.

“The problem of those who wait.—Happy chances are necessary, and many incalculable elements, in order that a higher man in whom the solution of a problem is dormant, may yet take action, or ‘break forth,’ as one might say—at the right moment. On an average it does not happen; and in all corners of the earth there are waiting ones sitting who hardly know to what extent they are waiting, and still less that they wait in vain. Occasionally, too, the waking call comes too late—the chance which gives ‘permission’ to take action—when their best youth, and strength for action have been used up in sitting still; and how many a one, just as he ‘sprang up,’ has found with horror that his limbs are benumbed and his spirits are now too heavy! ‘It is too late,’ he has said to himself—and has become self-distrustful and henceforth forever useless.—In the domain of genius, may not the ‘Raphael without hands’ (taking the expression in its widest sense) perhaps not be the exception, but the rule?—Perhaps genius is by no means so rare: but rather the five hundred hands which it requires in order to tyrannize over the kairos, ‘the right time’—in order to take chance by the forelock!”

We all ride the same orb through the galaxy here, products of the same glorious struggle for survival, progeny of the same springs of ingenuity and imagination, the accomplishments of many triumphant generations’ worth of “a better tomorrow for our grandchildren”. Life extension, space travel and all the rest aren’t just goals for some particular culture or two; this is for all of us, this is for continuing the tradition of the evolution of our species and the universe.

The Roman Empire never did conquer the North East. Communication was harder back then, but let’s say that they had a signup sheet and a newsletter that went out to the whole empire within a minute. Do you think they would have been able to drum up enough support and unity to get it done then? Of course, it would have been simple then. They would have conquered the world. That’s what a Facebook page does for us. It SEEMS too simplistic, but it’s not. If you don’t want the window to close, then just “like” the movement for indefinite life extension page, and add people and direct them to like the page as if your life depends on it. Every aspect of the cause goes out to those “likers”, those de facto petition signers, and increased percentages of them are actively sent off to fitting projects and organizations and involved by us, one on one, via notices and other methods from there. One solid, unified showing of numbers, social proof, is the only thing standing between you and the train ride to the future.

“the most divergent, the man beyond good and evil, the master of his virtues, and of super-abundance of will; precisely this shall be called GREATNESS: as diversified as can be entire, as ample as can be full.”

Now, on through the window and into the mysteries, in pursuit of the homunculus, to the edges of infinity, through the wonders of imagination, down into the atoms, in search of the purpose of life we go!