It is commonly assumed that tiny particles just go with the flow as they make their way through soil, biological tissue, and other complex materials. But a team of Yale researchers led by Professor Amir Pahlavan shows that even gentle chemical gradients, such as a small change in salt concentration, can dramatically reshape how particles move through porous materials. Their results are published in Science Advances.

Colloids are small particles such as fine clays, microbes, and engineered particles. How they move through porous materials such as soil, filters, and biological tissue has wide-ranging consequences for fields from environmental cleanup to agriculture.

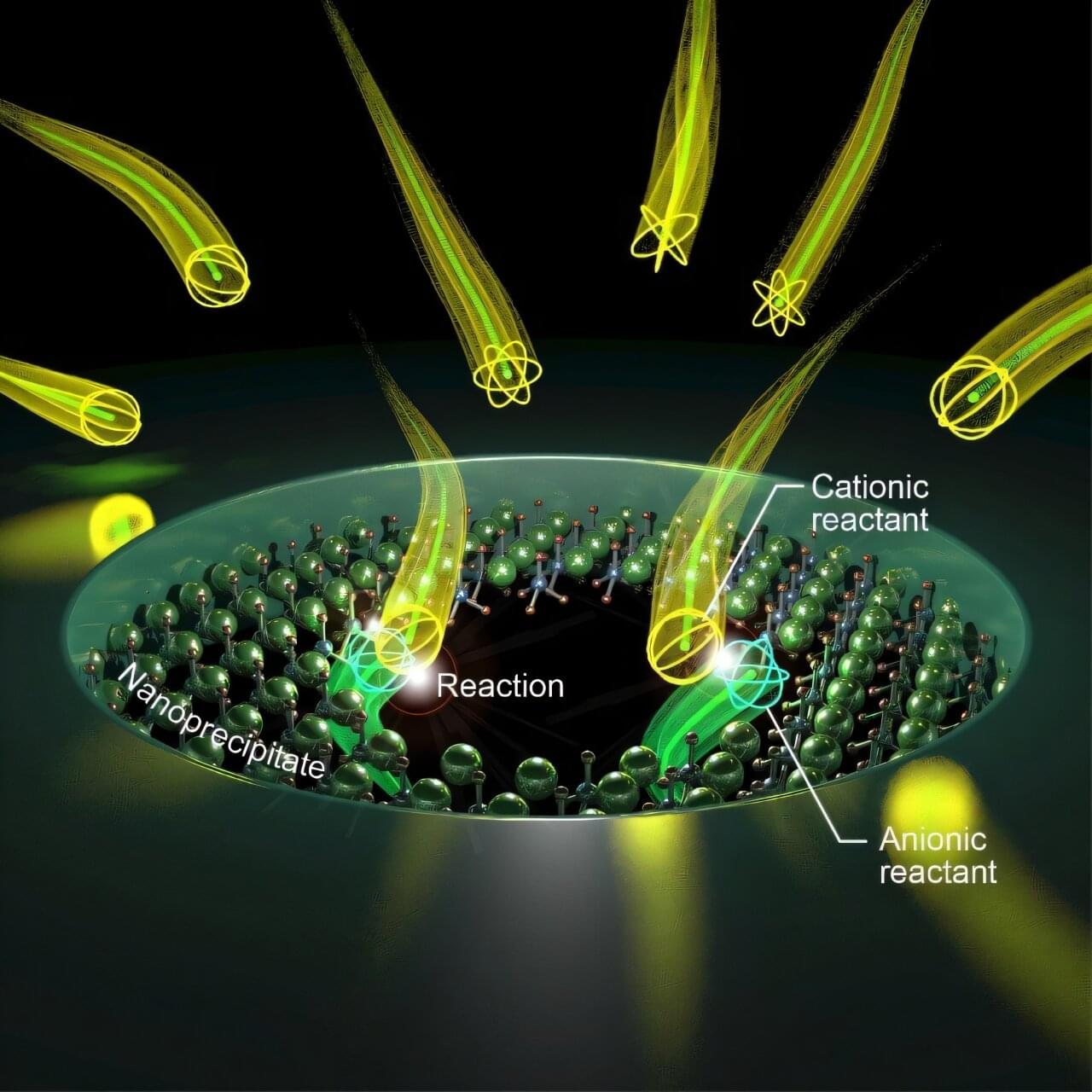

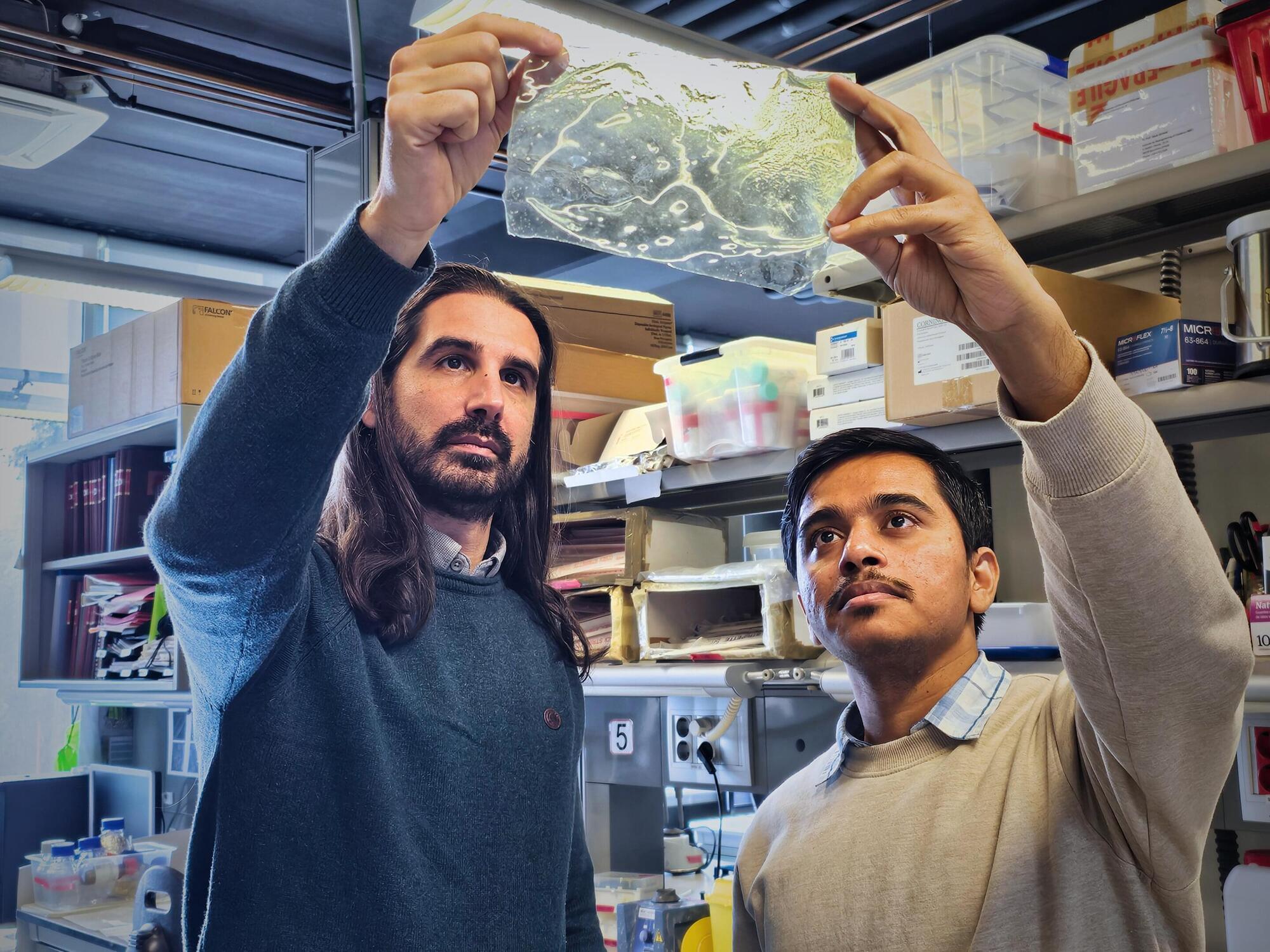

It has long been known that chemical gradients (gradual changes in the concentration of salt or other chemicals) can drive colloids to migrate in a particular direction, a phenomenon known as diffusiophoresis. However, this effect was often assumed to matter only when there was little or no flow, because phoretic speeds are typically orders of magnitude smaller than average flow speeds in porous media. Experiments in Pahlavan’s lab showed a very different outcome.
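The following rough, hypothetical illustration shows why phoretic drift was thought to be negligible. It uses the standard expression for diffusiophoretic velocity, u = Γ ∇ln c, where Γ is the particle's diffusiophoretic mobility and c is the salt concentration. The mobility, concentrations, length scale, and flow speed are assumed round numbers chosen for illustration. They are not values from the Yale study.

```python
import math


def diffusiophoretic_speed(mobility, c_high, c_low, length):
    """Approximate diffusiophoretic speed in m/s from u = mobility * d(ln c)/dx.

    The gradient of ln(c) is approximated by its average over `length`.
    mobility: diffusiophoretic mobility in m^2/s (assumed value)
    c_high, c_low: salt concentrations at the two ends (any consistent unit)
    length: distance over which the concentration changes, in m
    """
    grad_ln_c = math.log(c_high / c_low) / length
    return mobility * grad_ln_c


# Illustrative, assumed values (not taken from the study):
MOBILITY = 1e-10     # about 100 um^2/s, an assumed order of magnitude
FLOW_SPEED = 10e-6   # assumed mean flow speed of 10 um/s

# A gentle, twofold change in salt concentration across 1 mm
u_dp = diffusiophoretic_speed(MOBILITY, c_high=2.0, c_low=1.0, length=1e-3)
ratio = FLOW_SPEED / u_dp

print(f"phoretic speed: {u_dp * 1e6:.3f} um/s")
print(f"flow speed / phoretic speed: {ratio:.0f}x")
```

With these assumed numbers, the drift is well under 1 µm/s, roughly two orders of magnitude slower than the assumed flow. This is the intuition that the experiments in Pahlavan's lab call into question.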